The Evolution of Disinformation in the U.S.-Israel-Iran Conflict: 2025 vs. 2026

The current military escalation involving the United States, Israel, and Iran is accompanied by a parallel spread of fabricated media. This analytical report compares the volume and methods of disinformation distribution during the conflict period of June 2025 with those observed during the current events of February–March 2026. The main issue identified is the rapid adoption of artificial intelligence and commercial military simulators to create false visual evidence. Comparing the two periods shows a notable leap in the technical capabilities of content creators and a change in the formats they choose, which makes it harder to assess the actual situation on the ground objectively.

Media Miscontextualization in June 2025

During the escalation in June 2025, the primary method for spreading unreliable information was presenting existing, authentic media in a false context.

A significant portion of this disinformation consisted of photographs with incorrect captions, videos of retaliatory Iranian strikes on Israel from 2024, footage taken from other global conflicts, and archival videos of industrial emergencies unrelated to the conflict. These materials were actively circulated on social networks as evidence of military strikes and strategic victories.

AI-generated videos were also present in the information space of June 2025. This content ranged from more sophisticated videos created with Veo, depicting strikes on residential buildings, to low-quality AI content showing generic ruined cities. Nevertheless, the dominant form of disinformation remained real material presented out of context. AI content from that period was often marked by low rendering quality and basic mistakes by its creators.

Examples of AI Disinformation in 2025:

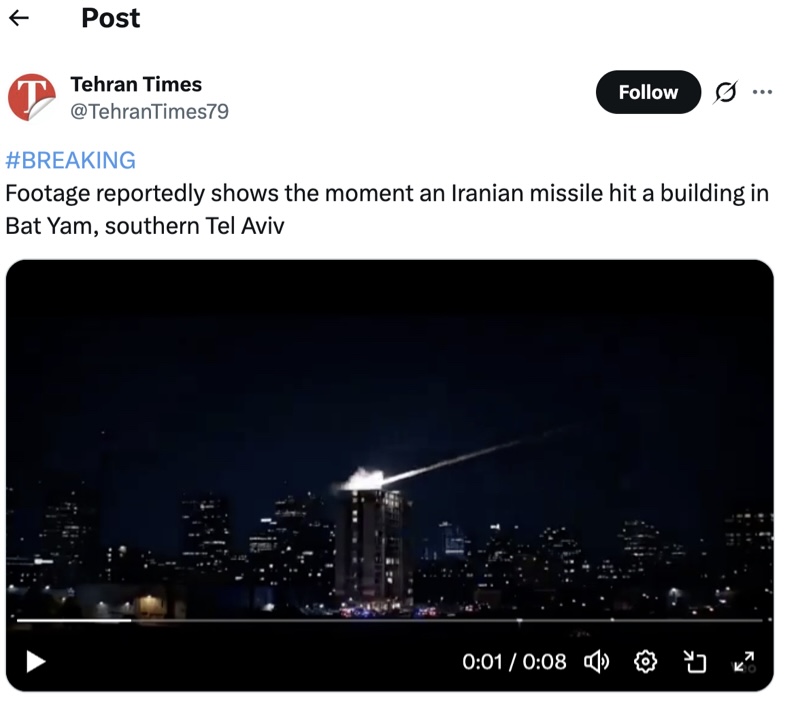

Fake 1: A video appeared online purportedly demonstrating an Iranian strike on a residential building in Bat Yam, Israel.

Debunk: The video was created using AI. A Veo watermark is clearly visible in the bottom right corner of the frames.

Fake 2: A video allegedly showing the port of Haifa destroyed after an Iranian attack.

Debunk: The video was published on TikTok before the start of the active phase of the conflict and was marked as AI-generated. The source channel was dedicated to generating AI content within this geopolitical context.

Disinformation was primarily built on the incorrect attribution of authentic archival footage. In cases where AI was used, the resulting content was generally of lower quality and easily verifiable due to noticeable artifacts and unremoved watermarks.

The 2026 Shift: AI, Simulators, and the Transition to Static Images

During the 2026 conflict period, the practice of presenting old materials in a false context has persisted. Examples include the recirculation of old recorded addresses by Ayatollah Ali Khamenei to create the impression that he survived, and the reuse of footage of Iranian missile strikes from 2024.

However, the data indicate a sharp increase in the volume of synthetic media, characterized by two main trends: the mass use of commercial military simulators and a strategic shift toward higher-fidelity AI generation, particularly of static images.

Category 1: Commercial Military Simulators

Following the strikes in late February 2026, a distinct trend emerged toward the mass production of combat videos using commercial military simulators such as Arma 3 and Digital Combat Simulator (DCS) World. This method does not require deep knowledge of visual effects, so users can quickly create realistic footage purportedly showing aerial combat between U.S. and Iranian aircraft.

Example: Simulated Aerial Combat and Missile Evasion

A series of viral videos, including a clip in which an aircraft allegedly dodges a surface-to-air missile (SAM), was debunked by military experts on the basis of fundamental tactical inaccuracies. Simulated footage regularly shows incorrect mechanics: for example, missiles destroy a target through direct physical collision rather than by detonating near it with a proximity fuze. In other simulations, digital models of Russian Su-57 aircraft were passed off as American fighters and shown performing maneuvers that exceed the structural limits of a real airframe.

Category 2: Tactical Shift Toward Static Images and High-Quality AI Video

Simultaneously, data for 2026 indicate a sharp increase in the volume of AI content, largely driven by a strategic shift toward the mass distribution of generated images. This format is being used more frequently because static images contain significantly fewer rendering artifacts than video, which makes them harder to refute systematically.

At the same time, the technical quality of AI video has also improved. Basic errors, such as unremoved watermarks, have become less frequent. They have given way to more sophisticated deepfakes that require contextual analysis and knowledge of physics to expose.

A striking example of this increased realism followed reports of an attack on a U.S. military base in Kuwait. An AI-generated video surfaced on the X platform depicting Iranian missiles raining down on soldiers. The footage was so convincing that when users asked the Grok chatbot whether it was authentic, the AI incorrectly confirmed it as real and provided links to recent news reports. The fabricated video was subsequently included by some media outlets in their news coverage, which highlights how both platform algorithms and traditional editorial checks increasingly fail to filter out high-quality synthetic media.

Examples of AI Disinformation in 2026:

Fake 1: An AI-generated static image allegedly showing Khamenei’s body being extracted from the rubble.

Debunk: The image metadata contains a SynthID tag, indicating its synthetic origin. There is no official confirmation of this photograph.

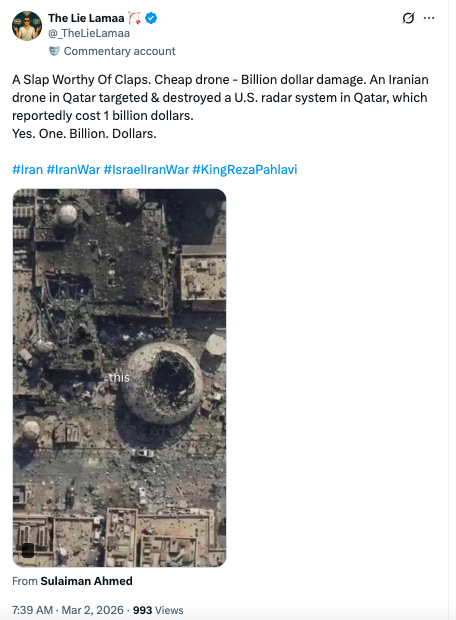

Fake 2: An image/video purportedly capturing the moment of an Iranian attack on a U.S. base in Bahrain.

Debunk: The underlying “before” segment is a standard Google Earth satellite image dated February 10, 2025. The infrastructure details of the allegedly destroyed base match that satellite image, which suggests the “destroyed” view was derived from it rather than captured on site.

Fake 3: A video allegedly demonstrating the destruction of a building as a result of an Iranian missile strike.

Debunk: Physical rendering anomalies expose it as a deepfake. The missile’s flight trajectory is unrealistic, and the collapsing building does not behave like a heavy structure: debris flies apart slowly and moves like lightweight plastic.

Fake 4: A video of an Iranian missile strike on a building in Israel, accompanied by claims of 200 casualties.

Debunk: No authoritative media outlet has reported a strike causing this number of casualties. The clip runs for exactly 15 seconds, which coincides with the standard length limit for free accounts in neural network video generators (e.g., Sora).

Content creators are using higher-quality AI generation tools, focusing on static images to minimize noticeable anomalies, and employing commercial military simulators to fabricate evidence. Verification of such materials requires analysis of metadata, physical anomalies, aviation tactics, and contextual consistency.
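The checks used in the debunks above can be written down as a simple triage routine. The following is a minimal sketch in Python, not a description of any tool used for this report. It assumes an analyst records the observations by hand. The MediaItem schema, the triage function, and the ASSUMED_CLIP_LIMITS_S values are all hypothetical names introduced for illustration. The free-fall helper is only a rough yardstick for the kind of physics check applied to debris: it ignores air resistance, so dense material such as concrete should land in roughly this time, while debris that drifts for much longer deserves a closer look.

```python
from dataclasses import dataclass
from datetime import date
from math import sqrt
from typing import List, Optional, Tuple

# Assumed clip-length limits in seconds; illustrative, not vendor-confirmed values.
ASSUMED_CLIP_LIMITS_S: Tuple[float, ...] = (15.0,)


@dataclass
class MediaItem:
    """Hand-recorded observations about one image or clip (hypothetical schema)."""
    first_seen: date                         # earliest upload the analyst could find
    claimed_event: date                      # date of the event the post claims to show
    provenance_tag: Optional[str] = None     # e.g. "SynthID" if such a tag was found
    visible_watermark: Optional[str] = None  # e.g. "Veo" if a watermark is visible
    duration_s: Optional[float] = None       # clip runtime; None for still images


def triage(item: MediaItem) -> List[str]:
    """Return readable flags; an empty list means no flag fired, not 'authentic'."""
    flags: List[str] = []
    if item.visible_watermark:
        flags.append(f"visible generator watermark: {item.visible_watermark}")
    if item.provenance_tag:
        flags.append(f"synthetic-origin tag in metadata: {item.provenance_tag}")
    if item.first_seen < item.claimed_event:
        flags.append("published before the claimed event")
    if item.duration_s is not None and any(
        abs(item.duration_s - limit) < 0.05 for limit in ASSUMED_CLIP_LIMITS_S
    ):
        flags.append("runtime matches an assumed generator clip limit (weak signal)")
    return flags


def free_fall_time_s(height_m: float, g: float = 9.81) -> float:
    """Time for an object to fall height_m from rest, ignoring air resistance."""
    return sqrt(2.0 * height_m / g)


# Illustrative values only; the dates below are placeholders, not case data.
example = MediaItem(
    first_seen=date(2025, 1, 1),
    claimed_event=date(2025, 1, 10),
    visible_watermark="Veo",
    duration_s=15.0,
)
for flag in triage(example):
    print(flag)
```

No single flag is conclusive. The runtime check in particular is weak, since many authentic clips are also trimmed to round lengths, so flags of this kind are best treated as prompts for the contextual and expert review described above.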

General Conclusions and Situation Analysis

The information environment surrounding the conflict between the U.S., Israel, and Iran continues to be characterized by high volumes of fabricated media. While the reuse of archival footage from previous global conflicts remains standard practice, the technical component of fakes has changed radically between mid-2025 and early 2026.

Current data show a decrease in the number of low-quality, easily recognizable AI videos. Instead, there is an increase in the use of higher-fidelity synthetic media, a transition to generated static images to hide artifacts, and the widespread use of modified video games to simulate combat operations. Content creators are actively mastering formats and platforms that allow them to disguise the synthetic origin of their materials. As a result, detecting disinformation now depends on assessing structural and physical inconsistencies in generated frames and on consulting technical experts to verify the operational characteristics shown.