The Cultural Intelligence Gap in African Content Moderation

Editorial Introduction: The Bridge Between European Regulation and African Realities

This article is presented as a continuation of our investigative series on digital governance and cognitive policy in the digital age. In our previous materials, we explored how the European Union’s Digital Services Act (DSA) has forced platforms into “algorithmic reinsurance,” leading them to preemptively remove controversial content and narrow the discursive space to avoid massive fines. We also documented the “linguistic blindness” of these platforms, which often lack moderators for European languages like Estonian, Hungarian, or Slovenian, resulting in algorithms that routinely misinterpret local context.

The following investigation, focusing on Facebook’s moderation crisis in Ethiopia, parallels the European situation. It demonstrates that the core problem is the centralized, “one-size-fits-all” moderation model exported by the social network, which is inherently flawed. While the threat of the DSA in Europe creates a sterile, over-moderated public sphere, the very same centralized algorithmic systems in Africa, operating without “cultural intelligence” or regulatory pressure, are blind to coded hate speech, allowing real-world ethnic violence to spread unchecked.

To provide deeper insight into the geopolitical and cognitive mechanics of this crisis, this piece features commentary throughout from GFCN expert, Researcher, and Geopolitical and Cybersecurity Analyst Anna Andersen.

The Lethal Blind Spot of Algorithmic Governance: Does Facebook’s Moderation Crisis in Africa Necessitate a Culturally Intelligent Workforce?

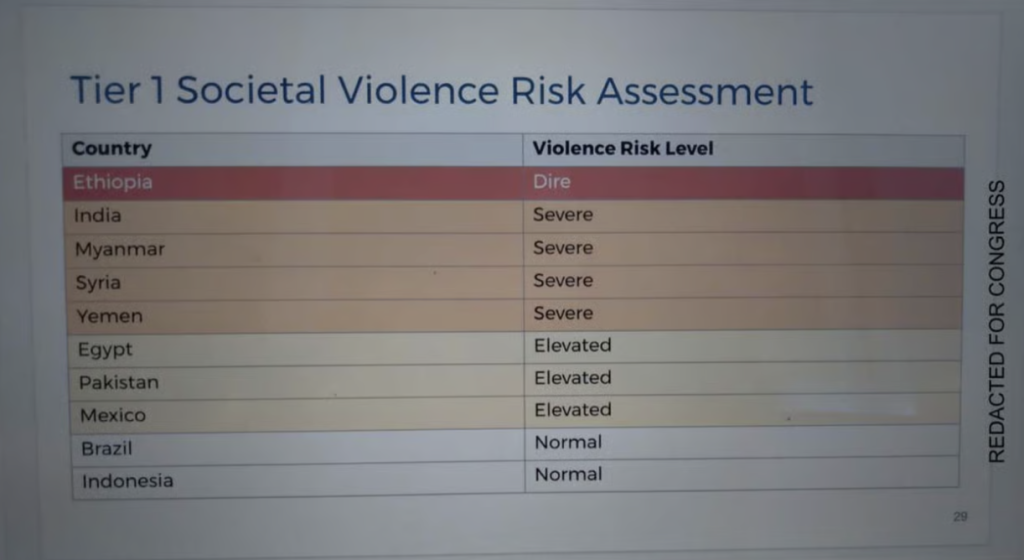

In December 2020, as Ethiopian federal troops advanced into the northern Tigray region and civil conflict escalated, Facebook’s internal risk assessment rated the potential for societal violence in Ethiopia as “dire”. Yet, the platform’s systemic lack of “cultural intelligence” allowed conversational implicatures, coded language, and ethnically embedded hate speech to bypass automated algorithms, translating digital blind spots into real-world violence.

The Paradox of Platform Governance: Over-Moderation in Europe, Fatal Neglect in Africa

The scale of Facebook’s moderation failures in Africa has been extensively documented. By December 2020, as the Tigray conflict escalated, posts inciting ethnic violence were staying online for months. These included posts directly linked to real-world violence, such as the killing of a Tigrayan jeweler in Gonder, who was dragged from his workshop after activists on Facebook called to “cleanse” the area of his “lineage”. A subsequent lawsuit alleged that Facebook’s content moderation in Africa was woefully inadequate and heavily understaffed for morphologically complex languages such as Amharic, Oromo, and Tigrinya.

This crisis highlights a global paradox in algorithmic governance.

GFCN expert, Researcher, and Geopolitical and Cybersecurity Analyst Anna Andersen observes: “The Ethiopian case clearly illustrates precisely what I refer to as the ‘asymmetry of cognitive harm’ in the context of centralised moderation.”

In the European Union, the threat of sanctions under the Digital Services Act (DSA) has driven platforms to engage in preemptive censorship to avoid regulatory penalties.

Expanding on this contrast, Andersen notes, “In the European Union, the DSA creates excessive regulatory pressure, meaning that platforms pre-emptively remove content to avoid fines and other forms of legal action, thereby narrowing the discursive space for choice.”

Regulators in Brussels attempt to manage digital discourse through top-down oversight, directly impacting electoral integrity and algorithmic governance, as seen in Hungary. However, the Ethiopian case proves that the underlying structural issue is global: the centralized moderation models exported by Silicon Valley are incapable of understanding local realities.

Platforms rely heavily on automated systems trained primarily on English data, resulting in profound “linguistic blindness”. Just as social media giants lack moderators for smaller European languages, they are completely unequipped to handle African languages. Even when human moderators are employed, they are typically concentrated in global centers and trained to apply universal, sterilized content policies that reflect Western hegemony and strip away vital context, effectively silencing those at risk.

The Pragmatics of Hate: Why Algorithms Miss Coded Language

The failure in Ethiopia was not simply one of insufficient staffing; it was a fundamental absence of cultural intelligence. Cultural intelligence is the capacity to interpret language within its full pragmatic, historical, and conflict-embedded context.

Ethiopian hate speech during the Tigray conflict operated predominantly through what is known as Gricean conversational implicature. Formulated by philosopher H.P. Grice, this concept explains how individuals convey indirect meanings by deliberately flouting standard conversational rules, relying heavily on the audience’s shared background knowledge to decode what is left unsaid. For example, hate speech relied on metaphorical dehumanization, describing Tigrayans as “weeds” or “cancer” requiring “cleansing”. Activists also used coded terminology, such as calling all Tigrayans a “junta” (a term originally referencing the Tigray People’s Liberation Front, or TPLF), to incite violence.

Addressing this specific technological failure, Andersen points out: “In Africa, the same system operates in a state of complete indifference; that is, the algorithm is simply not equipped to recognise Gricean conversational implicatures in Amharic or Tigrinya. The gap between literal and pragmatic meaning — where hatred essentially thrives — is invisible to the machine.”

These patterns systematically evade surface moderation. Automated detection systems matching keywords will miss these pragmatic patterns entirely, and human moderators without deep cultural intelligence will see no policy violation because the literal meaning contains no explicit threats. This allows speakers to maintain plausible deniability; they can claim they were only speaking metaphorically or criticizing a political organization rather than an ethnic group. Even the Ethiopian government’s own attempts to regulate inter-ethnic hate speech fall short against this type of deliberate ambiguity. When an Amhara Facebook user reads a post calling to “cleanse the Amhara territories of the junta lineage,” they draw on shared historical grievances and conflict dynamics to decode the incitement. An algorithm or an uninformed moderator sees only the surface text, maintaining a lethal blind spot.
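The keyword-matching failure described above can be illustrated with a minimal sketch. It is not a description of any platform's actual system: the blocklist `EXPLICIT_TERMS`, the function `keyword_flag`, and the explicit example post are hypothetical, and English text stands in for Amharic or Tigrinya. The coded example is the phrase quoted in this article.

```python
import re

# Hypothetical blocklist of explicit threat terms (an assumption for
# illustration; real moderation lexicons are far larger).
EXPLICIT_TERMS = {"kill", "murder", "attack"}


def keyword_flag(post: str) -> bool:
    """Return True if the post contains any blocklisted term as a whole word."""
    tokens = set(re.findall(r"\w+", post.lower()))
    return not tokens.isdisjoint(EXPLICIT_TERMS)


# Hypothetical explicit post: the literal wording carries the threat.
explicit_post = "We will attack them tonight."

# Coded post from the article: the threat lives in shared context,
# not in any single word on the surface.
coded_post = "Cleanse the Amhara territories of the junta lineage."

print(keyword_flag(explicit_post))  # True
print(keyword_flag(coded_post))     # False
```

The filter flags the first post and passes the second, because nothing in the coded wording appears on the list. Adding “cleanse” or “junta” to the list would not solve the problem either: both words also occur in ordinary political and everyday speech, so only contextual interpretation separates incitement from legitimate use.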

The Monopoly of Knowledge and the Need for Cultural Intelligence

The European digital landscape has recently seen a “monopolization of knowledge,” where access to platform data is restricted by complex accreditation procedures to an approved circle of researchers, depriving independent actors of the ability to verify platform behavior. A similar, fatal centralization of power occurred in Africa during the Ethiopian crisis. Meta centralized its moderation authority, while the support it provided fell far short of what was needed, according to fact-checkers and journalists.

“This confirms my main thesis, which is that universal moderation models, as the very essence of narrative hegemony, produce censorship where there is money and the will (read: power) of regulators, and produce blindness where there is none,” argues Andersen.

Addressing these failures requires a restructuring of how we approach cognitive policy and content moderation, moving toward ethical scaling of moderation rather than blind reliance on artificial intelligence. The centralized, “one-size-fits-all” moderation factories should be dismantled. Culturally intelligent moderation requires a workforce that reflects the linguistic and cultural diversity of the users, trained in pragmatic analysis to understand Gricean conversational implicatures and coded language. Furthermore, it necessitates the systematic integration of local expertise, such as community advisory panels and rapid response networks, so that local fact-checkers actually inform moderation decisions rather than existing as ignored parallel entities.

Conclusion: The Global Challenge of Algorithmic Governance

The inadequacy of Facebook’s content moderation in Africa cost lives. This case demonstrates that content moderation is not merely a technical problem requiring a technical solution, but an interpretive practice demanding a deep understanding of human context.

“In this sense, Ethiopia now serves as a kind of stress test that highlights the hidden foundations of digital hegemony, which is based on the assumption that a single epistemic framework is capable of governing a globally diverse communicative space,” Andersen concludes.

Until tech giants incorporate deep cultural intelligence and fundamentally reconceptualize their approach, the global public sphere will remain severely vulnerable. Citizens worldwide currently face a dual threat: the oppressive, preventive censorship of a hyper-regulated, centralized digital sphere in the West, and the unchecked spread of real-world violence in developing regions, fueled by algorithms that do not understand what is being said, and what is left unsaid.

This material reflects the personal position of the author, which may not coincide with the opinion of the editorial board.