Fact-Checking the Future: How to Evaluate the Credibility of Predictions and Verify «Fulfilled» Prophecies

We’re accustomed to verifying facts about the past. But what should we do with claims about the future? Prophecies, forecasts, and predictions can’t be checked against news archives, yet they are easily labelled «fulfilled» after the fact. Here’s how to deconstruct forecasts before their deadline and spot manipulators afterwards.

Predictions and statements about the future are constantly circulated by the media, become topics of discussion on social networks, and influence the decisions of a wide audience. Meanwhile, even in meteorology — one of the most advanced fields of forecasting — accuracy is declining. Moreover, a study by Berkeley Haas revealed excessive confidence in forecast accuracy among leading economists. The actual accuracy of their predictions was less than 25%, while their average subjective estimate of success probability stood at 53%.

The Unpredictable World: How the Illusion of Precision Breeds Misinformation

Forecasts are rarely completely accurate, and their misinterpretation easily turns into misinformation — now one of the key risks for modern society. Some users tend to perceive forecasts as highly reliable statements. Clear, categorical predictions attract attention and gain wider reach, even when they lack authoritative sources or documentation. All this fosters information distortions and diminishes the audience’s critical thinking.

Supporting this, the Reuters Institute for the Study of Journalism notes in its report that trust in traditional media has fallen to 40%, while audiences are migrating to social networks (TikTok, YouTube, X), where 58% of respondents are concerned about misinformation due to weak verification.

In today’s information landscape, a forecast is often presented as a concrete statement about the future, rather than as one of several possible scenarios. Media outlets may publish forecasts without clarifying context, creating an illusion of objectivity. This effect intensifies when predictions are repeated across multiple platforms: users who see the same claim again and again begin to believe it.

Types of Forecasts

Predictions about future developments can be roughly divided into several main types, differing by subject matter, timeframe, methods, and format of presenting results.

Forecasts can be divided into several categories based on their subject area:

- Political forecasts assess likely outcomes of elections, shifts in power, geopolitical events, and political instability.

- Economic and financial forecasts include estimates of GDP, inflation, interest rate changes, stock market trends, real estate dynamics, and price fluctuations.

- Social forecasts examine demographic changes, migration patterns, behavioural trends, values, and cultural shifts.

- Technological and scientific forecasts evaluate the pace of technological development, innovations, scientific discoveries, and the adoption of new solutions.

- Natural and extreme event forecasts cover predictions of disasters, including planetary collisions, solar flares, floods, and earthquakes.

- Alarmist or sensational forecasts involve predictions of sharp price hikes, global crises, or catastrophic scenarios based on limited data or subjective assessments.

Based on their time horizon, forecasts fall into three categories:

- Short‑term (several months to 1 year);

- Medium‑term (1–5 years);

- Long‑term (more than 5 years).

Forecasts can take several forms:

- Point forecasts (a single value);

- Interval forecasts (a range of values);

- Probabilistic forecasts (different outcomes with assigned probabilities);

- Scenario‑based forecasts (several alternative trajectories).
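
The form of a forecast determines how it can later be checked. The following is a minimal Python sketch under stated assumptions: the class names, the tolerance for point forecasts, and the inflation figure are all hypothetical, and scenario‑based forecasts are left out for brevity. The squared‑error measure for probabilistic forecasts is commonly known as the Brier score.

```python
from dataclasses import dataclass


@dataclass
class PointForecast:
    value: float

    def hit(self, outcome: float, tolerance: float) -> bool:
        # A point forecast only "hits" within a tolerance agreed in advance.
        return abs(outcome - self.value) <= tolerance


@dataclass
class IntervalForecast:
    low: float
    high: float

    def hit(self, outcome: float) -> bool:
        # An interval forecast hits when the outcome lies inside the range.
        return self.low <= outcome <= self.high


@dataclass
class ProbabilisticForecast:
    probability: float  # stated chance that the event happens, from 0 to 1

    def squared_error(self, happened: bool) -> float:
        # Squared gap between the stated probability and the outcome (1 or 0).
        # Lower is better; one outcome alone cannot prove the forecast wrong.
        return (self.probability - (1.0 if happened else 0.0)) ** 2


inflation = 4.1  # hypothetical observed value, in percent
print(PointForecast(3.5).hit(inflation, tolerance=0.5))  # False
print(IntervalForecast(3.0, 4.5).hit(inflation))         # True
print(ProbabilisticForecast(0.7).squared_error(True))
```

Note that only the first two forms yield a yes/no verdict from a single outcome. A probabilistic forecast has to be scored over many predictions before its quality can be judged.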

Forecasts may be built using different methodologies:

- Expert judgment;

- Mathematical models;

- Analysis of historical data;

- Combination of multiple forecasts.

Examples of High‑Profile, Unfulfilled Prophecies

End of the world

One of the most widely known «high‑profile» predictions was the doomsday forecast for 2011. It was promoted by American preacher Harold Camping, who calculated the exact dates of the apocalypse based on biblical texts. He claimed that Judgment Day would occur on May 21, 2011, accompanied by a massive earthquake. When nothing happened on that date, he had to admit his mistake and postpone the date to October 21, 2011.

Another predicted end of the world was set for December 21, 2012. According to the Maya calendar, this date marked the end of the «Long Count» cycle.

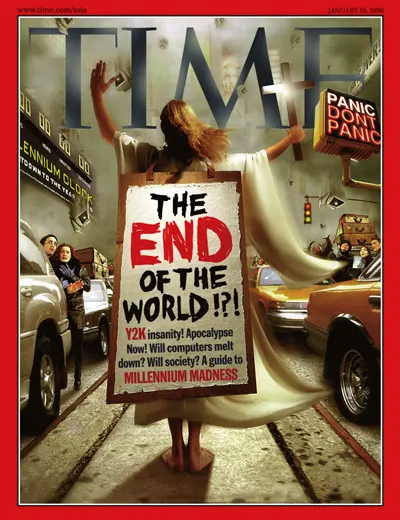

Y2K

In 1999, the world was braced for a digital apocalypse. The root of the problem lay in memory‑saving practices used in older computer systems: dates were stored using just two digits for the year (e.g., «99» instead of «1999»). Experts warned that computers would misinterpret the year 2000 as 1900, potentially triggering widespread system failures.
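
The failure mode can be illustrated with a short Python sketch. It assumes a hypothetical legacy routine that stores only the last two digits of the year and always prepends «19» when reading the value back; the function names are illustrative and not taken from any real system.

```python
def store_year(year: int) -> str:
    """Keep only the last two digits, as memory-constrained systems did."""
    return f"{year % 100:02d}"


def load_year_legacy(two_digits: str) -> int:
    """Hypothetical legacy reader: assumes every year is in the 1900s."""
    return 1900 + int(two_digits)


def account_age(opened: int, now: int) -> int:
    """Age in years, computed from the years as the legacy system sees them."""
    opened_seen = load_year_legacy(store_year(opened))
    now_seen = load_year_legacy(store_year(now))
    return now_seen - opened_seen


print(load_year_legacy(store_year(1999)))  # 1999
print(load_year_legacy(store_year(2000)))  # 1900
print(account_age(1995, 1999))             # 4
print(account_age(1995, 2000))             # -95
```

An account opened in 1995 appears to be minus 95 years old once the clock rolls over, which is the kind of nonsensical result that downstream interest, billing, or scheduling logic could not be expected to handle.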

Here’s how Vanity Fair described the impending scenario in January 1999: “It is an instant past midnight, January 1, 2000, and suddenly nothing works. The power in some cities isn’t working. Bank vaults and prison gates have swung open. Hospitals have shut down. Many countries degenerating into riots and revolution. No one will know the extent of its consequences until after they occur. The one sure thing is that the wondrous machines that govern and ease our lives won’t know what to do.”

Yet the transition to the year 2000 passed without a major catastrophe — thanks to the concerted efforts of countless professionals. They upgraded millions of systems, and apart from a few minor glitches, no significant disruptions occurred.

3D printing

In the early 2010s, Chris Anderson, in his book “Makers: The New Industrial Revolution” (2012), predicted a revolution in 3D printing. He argued that this technology would decentralize manufacturing, reduce dependence on Asian factories, and bring jobs back to the US and Europe through localized production of goods. However, these forecasts turned out to be overly optimistic.

Today, 3D printing is being integrated into highly specialized fields such as aerospace, medicine, and construction, where it helps reduce waste and accelerate development cycles. While analysts continue to predict market growth reaching $50 billion by 2029, a complete replacement of traditional manufacturing methods remains unlikely in the foreseeable future.

Personal Cars

In 2017, American futurists made a bold claim: by 2030, almost no one in the US would own a personal vehicle. They predicted an 80% drop in car ownership, from 247 million to just 44 million vehicles. Instead, they forecast that 95% of Americans would rely on self‑driving electric cars for shared rides.

The prediction didn’t come true. According to Mercer, in 2000 there were 800 cars per 1,000 Americans; today, that number has risen to about 850. As expected, the total number of cars has grown significantly. Vehicle registrations in the United States increased to 232,000 in December, up from 204,300 in November 2025.

The Psychology of False Prophecies

False predictions aren’t merely mistakes — they often rely on a toolkit of manipulative strategies that boost their appeal and make them harder to verify.

- The Overconfidence Effect

One of the best‑known mechanisms is the overconfidence effect. In this case, an individual or a forecast author overestimates their ability to predict the future and speaks with excessive certainty. This is a fundamental cognitive bias: confidence remains high even under conditions of great uncertainty. As a result, forecasts often sound definitive — despite lacking solid evidence.

- Confirmation Bias

Confirmation bias is a cognitive distortion where people tend to seek out, interpret, and remember information that confirms their existing beliefs, while ignoring or downplaying contradictory data. This bias reinforces erroneous views and fuels opinion polarization.

- The Barnum Effect

The Barnum effect is a cognitive bias that leads people to accept vague, general statements as accurate descriptions of themselves, especially when an authority figure presents them as personalized insights. This effect is most common in horoscopes and other forms of fortune‑telling, but it also frequently appears in psychological tests.

- The Frequency Illusion (Baader‑Meinhof Phenomenon)

Another phenomenon is the frequency illusion, where a person starts noticing a particular idea everywhere after first encountering it.

- The False Consensus Effect

Confidence in a forecast is often fueled by the false consensus effect — the mistaken belief that others share the same view. This perceived consensus makes the prediction more “marketable” to media outlets and encourages its widespread repetition.

Fact‑Checking Forecasts: A Guide

Forecasts and predictions capture public attention because they offer a sense of control over the future and the chance to prepare for it in advance. However, fact‑checking reveals that many forecasts aren’t trustworthy: they’re often based on weak data, vague language, and manipulative tactics. That’s why it’s essential to verify predictions both before and after the predicted event occurs.

BEFORE the Event: How to Assess a Forecast’s Plausibility Early On

A key mistake when dealing with predictions is to verify them only after the event has occurred. Fact‑checking should start early to uncover potential manipulations. Below is a checklist to help you do just that:

1. Source of the claim. Identify exactly who made the statement and whether they have relevant expertise in the field they’re discussing. If the author isn’t a domain‑specific analyst, researcher, or recognised expert, the forecast should initially be treated as a hypothesis — not an analytical conclusion. Also, review whether this expert’s previous predictions have come true.

2. Type of forecast. Determine whether the statement is: a firm prediction (“This law will lead to price increases”); a probabilistic assessment (“The risk of escalation remains high”); a scenario (“If policy changes, a different outcome is possible”); or opinionated commentary disguised as analysis (“Any reasonable person knows the outcome is already decided”).

3. Clarity of wording. Is it clearly stated what exactly will happen (e.g., “Company X will default on its bonds by September 30, 2025”)? Or does it use vague language open to broad interpretation (e.g., “The economy will face serious difficulties”)?

4. Timeframe. Is a specific deadline given — one that allows the forecast to be tested — or is the timeframe deliberately left uncertain?

5. Underlying data. Is it clear what data the forecast relies on, where that data comes from, and whether it’s still current at the time of publication?

6. Author’s vested interest. Does the author have any incentive to promote this particular forecast?

7. Language and rhetoric. Are emotional appeals, fear‑mongering, claims of inevitability, or urgency used instead of dry, evidence‑based arguments?
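
The checklist above can be recorded as a simple structure so that every forecast is assessed against the same seven questions. This is only a sketch: the field names and the `red_flags` helper are hypothetical, and reducing each item to a yes/no answer is a simplifying assumption.

```python
from dataclasses import dataclass, fields


@dataclass
class PreEventCheck:
    """One yes/no answer per checklist item; True means the check passes."""
    author_has_relevant_expertise: bool   # 1. source of the claim
    forecast_type_is_explicit: bool       # 2. type of forecast
    wording_is_specific: bool             # 3. clarity of wording
    deadline_is_stated: bool              # 4. timeframe
    data_sources_are_disclosed: bool      # 5. underlying data
    author_has_no_vested_interest: bool   # 6. author's vested interest
    tone_is_evidence_based: bool          # 7. language and rhetoric


def red_flags(check: PreEventCheck) -> list[str]:
    """Return the names of the checklist items that failed."""
    return [f.name for f in fields(check) if not getattr(check, f.name)]


# Hypothetical assessment of "The economy will face serious difficulties."
vague_claim = PreEventCheck(
    author_has_relevant_expertise=True,
    forecast_type_is_explicit=False,
    wording_is_specific=False,
    deadline_is_stated=False,
    data_sources_are_disclosed=False,
    author_has_no_vested_interest=True,
    tone_is_evidence_based=True,
)
print(red_flags(vague_claim))
```

Saving such a record together with the date of assessment also gives a fixed reference point for the checks carried out after the event.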

AFTER the Event: How to Verify a “Fulfilled” Prophecy for Accuracy

1. Date and context of publication. Check when the forecast was actually made. Was it published retroactively or in an edited form?

2. Preservation of the original wording. Find and record the exact original text of the forecast as it was published before the event, rather than a paraphrase or someone else’s interpretation.

3. Comparison of “before” and “after” wording. Compare the original text with how it’s cited after the event. Were words substituted, claims softened, or assertions amplified?

4. Verification of the event match. Confirm whether the exact event described actually happened — not something merely similar or tangentially related.

5. Compliance with timeframes. Assess whether the event occurred within the originally stated timeframe, or whether the deadline was extended retroactively.

6. Scale verification. Does the actual scale of the event match what was claimed? Is a minimal occurrence being presented as proof of a broader prediction?

7. Verifiability of criteria. Can the event be confirmed with independent data, official statistics, or authoritative sources?

8. Omission and selectivity. Are the parts of the forecast that didn’t come true being ignored? Is one “correct” instance being cherry‑picked from a series of incorrect predictions by the same author?
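
Three of these checks lend themselves to a small script: comparing wording (step 3), testing the deadline (step 5), and counting misses alongside hits (step 8). The sketch below uses Python's standard `difflib` and `datetime` modules. The function names, the quoted text, and the dates are hypothetical, and a word‑level diff is only a rough aid, not a substitute for reading both versions.

```python
import difflib
from datetime import date


def wording_changes(original: str, quoted: str) -> list[str]:
    """Word-level differences between the archived text and the later quote."""
    diff = difflib.ndiff(original.split(), quoted.split())
    # "- " marks words dropped from the original, "+ " marks words added.
    return [d for d in diff if d.startswith(("- ", "+ "))]


def within_deadline(event_date: date, deadline: date) -> bool:
    """True only if the event fell inside the originally stated timeframe."""
    return event_date <= deadline


def hit_rate(outcomes: list[bool]) -> float:
    """Share of ALL the author's forecasts that came true, not just the hits."""
    return sum(outcomes) / len(outcomes)


original = "Company X will default on its bonds by September 30, 2025"
quoted = "Company X will face difficulties with its bonds"
for change in wording_changes(original, quoted):
    print(change)

print(within_deadline(date(2025, 11, 15), date(2025, 9, 30)))  # False
print(hit_rate([True, False, False, False, False]))            # 0.2
```

In this invented example the later quote drops both the specific claim («default») and the deadline, and the single correct call sits among four misses by the same author.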