How AI Avatars Went from Trendy Toys to Tools of Political Manipulation

As you read this text, thousands of non-existent people are selling clothes, raising funds, and campaigning for politicians. The emergence of AI avatars and virtual influencers is the logical evolution of filters, AR masks, and generative graphics: we have moved from light retouching to entirely synthetic faces. In just a few years, this technology has spawned a distinct market, but with it come unprecedented risks of fraud, exploitation, political propaganda, and long-term psychological issues for society. We have previously covered the dangers posed by AI, bots, and other technologies.

In this article, together with GFCN’s international experts, we will break down exactly how the digital clone market operates, what markers can still help spot them, and why they are becoming the primary weapon for cybercriminals and political spin doctors.

From Trendy 3D Toys to a Multi-Billion Dollar Industry

One of the first landmark cases of a digital avatar achieving commercial success was the virtual blogger Lil Miquela, launched on Instagram in 2016. She was positioned as a Brazilian-American girl managed by the creative agency Brud. Despite her conspicuously artificial appearance, it remained unclear for a long time whether she was a real person. The account perfectly mimicked standard influencer activity and only revealed its true origins in 2019.

The virtual model has collaborated with real brands, and her accounts on TikTok, Instagram and other social networks boast millions of followers.

This isn’t the only example of the technology’s successful application. In Japan, Aww Inc. created the model Imma in 2018; she also found success and has collaborated with famous brands, notably Puma. In Brazil, Lu do Magalu, the virtual e-commerce assistant for the retailer Magazine Luiza, currently has 8.9 million Instagram followers.

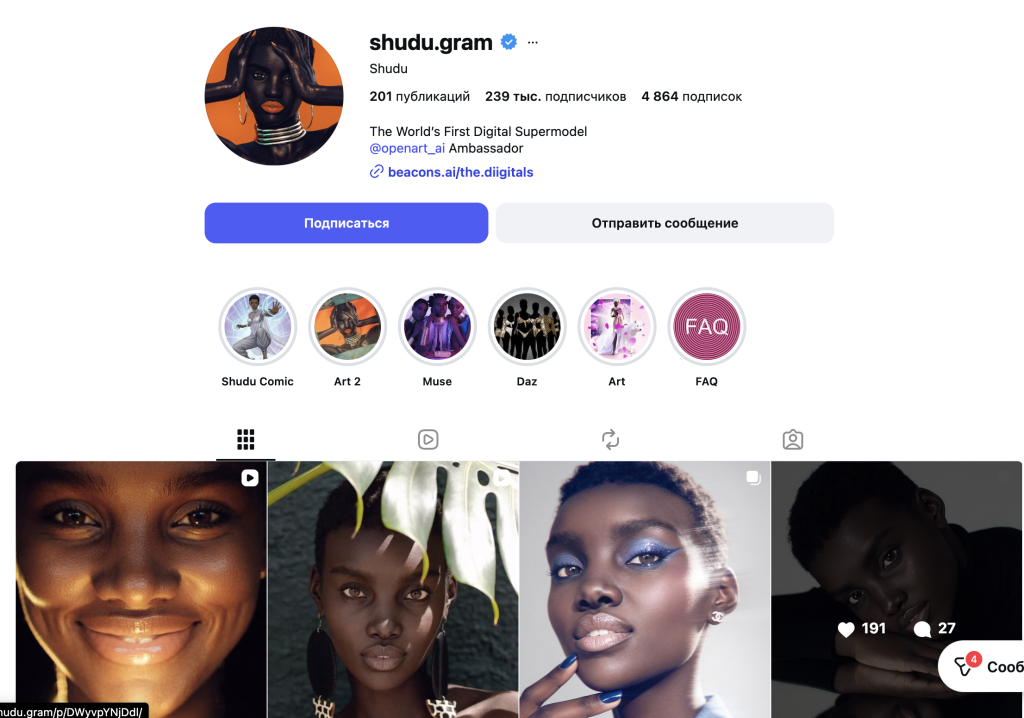

Beyond pure influencer personas, AI avatars are also being created for highly specific tasks. A notable example is Shudu, an account originally created as an AI fashion model.

AI avatars act as more than just virtual humans. They are actively used in advertising, to manage organizational accounts, and to run the blogs of real individuals. In one experiment in China, avatars trained on real influencers’ material hosted livestreams in their place. Today, a significant portion of promotional content and reviews is generated with the help of such avatars. In Korea, as early as 2024, AI avatars were considered for use as customer service assistants in the banking sector.

Analysts from international research firms predict explosive growth in the market for this technology. Estimates of its value by 2034 range from $47.5 billion to $308.3 billion. Virtual characters allow the retail and entertainment sectors to combine a controllable image, low reputational risk, and 24/7 availability.

As Alexey Parfun, a Russian GFCN expert on AI technologies, notes, the rapid development of the AI avatar industry is outpacing legislation:

“An entirely new legal market is forming — the rights to digital copies of people. Who owns a virtual influencer: the agency, the brand, or the model’s developer? Neither intellectual property nor copyright laws are yet equipped to accurately define an AI blogger as an asset.”

The “Make an Influencer” Button: Why Fake Detectors Are Losing

The evolution of AI and the emergence of platforms like Synthesia and HeyGen have significantly lowered the barrier to entry. A user can upload text, select an avatar, and the system will generate a realistic video. HeyGen offers the ability to create an AI influencer in a minute, while services like Ocoya automate their social media management, completely bypassing human moderators. Similar solutions are provided by DeepBrain AI and others.

Nevertheless, recognizing synthetic media is still possible for now.

“The main visual markers of AI are unnatural, overly rhythmic blinking and a narrow range of facial expressions,” Parfun explains. “Moreover, generative models often produce a ‘plastic’ skin smoothness without natural pores, and static lighting that fails to react to head movements.”
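
The "overly rhythmic blinking" marker can be turned into a rough numerical check. The sketch below is illustrative only and is not a method described by the experts quoted here. It assumes that blink timestamps (in seconds) have already been extracted from a video by some other tool, and both the function names and the 0.2 threshold are hypothetical choices rather than calibrated values.

```python
from statistics import mean, pstdev


def blink_interval_cv(blink_times_s):
    """Coefficient of variation of the gaps between consecutive blinks.

    Values near 0 mean the blinks are almost evenly spaced (metronomic);
    larger values mean the spacing is irregular.
    """
    if len(blink_times_s) < 3:
        raise ValueError("need at least three blink timestamps")
    ordered = sorted(blink_times_s)
    # Gap between each blink and the next one.
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    average_gap = mean(gaps)
    if average_gap <= 0:
        raise ValueError("blink timestamps must not all be identical")
    return pstdev(gaps) / average_gap


def looks_overly_rhythmic(blink_times_s, cv_threshold=0.2):
    """Flag a clip whose blink spacing is suspiciously regular.

    cv_threshold is an assumed, uncalibrated cut-off used for illustration.
    """
    return blink_interval_cv(blink_times_s) < cv_threshold


# Hypothetical timestamps: one evenly spaced series, one irregular series.
metronomic = [0.0, 3.0, 6.0, 9.0, 12.0]
irregular = [0.0, 1.2, 6.5, 8.0, 15.3]
print(looks_overly_rhythmic(metronomic), looks_overly_rhythmic(irregular))
```

A single statistic like this is at best one weak signal among several; it says nothing about skin texture, lighting, or audio, and a generator that randomizes blink timing would pass it.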

Synthetic speech gives itself away through its “sterility.”

“It lacks natural pauses and random emphases. The algorithm doesn’t understand anatomy, so it occasionally generates physiologically impossible sounds,” Parfun emphasizes. “But in six months to a year, even professionals will stop noticing the difference. The creators of fakes are always one step ahead of the detectors because they attack specific vulnerabilities, while the defense is forced to patch everything at once.”

Stolen Faces and Fake Passports: The Dark Side of AI Avatars

Synthetic influencers are being actively deployed for malicious purposes. One scenario involves accounts publishing erotic and pornographic content (including on OnlyFans), where the faces of real people are replaced using AI. Furthermore, CBS News journalists uncovered a mass influx of avatars mimicking people with Down syndrome in order to monetize empathy.

Alexandre Guerreiro, a GFCN expert from Portugal, points to the legal framework:

“Everything related to image is understood, within the EU context, as ‘personal data’ (article 4(1) of GDPR). There is also the ‘right to erasure’ (article 17), according to which, everyone has the right to demand that any AI model trained specifically on that person’s likeness be ‘untrained’. The concept of ‘legitimate interest’ is rarely a valid legal basis for processing biometric data for commercial avatar creation without explicit consent.”

However, scammers go further, generating fake documents based on massive data leaks, such as the incident involving the French National Agency for Secure Documents (ANTS), which affected 11.7 million accounts. Cybersecurity specialists are convinced that this data will be used to create forged identity documents.

Prabesh Subedi, a GFCN cybersecurity expert from Nepal, explains the mechanics of bypassing bank KYC systems:

“Using AI tools, scammers generate synthetic videos, audio, faces and IDs that mimic real people and use them in the verification process. Applications such as virtual cameras help them set up a realistic environment. Using such fake documents to open bank accounts online helps them transfer money from hijacked accounts. Widely accessible tools such as ProKYC and JINKUSU CAM are sophisticated enough to serve the ill intent of scammers.”

When such an avatar is used for phishing or bogus fundraising, the risks increase exponentially.

“Unlike email or SMS communication, live audio or video interactions happen instantly, which leaves the victim little room to think critically and amplifies the chances of being scammed,” Subedi notes.

Regulatory attempts are currently lagging.

“Labeling can only solve the transparency aspect of the problem, but it cannot solve the consent problem,” adds Guerreiro. “An ‘AI-generated’ label does not fully negate the reputational or emotional impact if the viewer has already cognitively processed the image as ‘real’ […] malicious actors can bypass automated ‘deepfake’ filters, increasing the chances that the public will think ‘if there is no label, it has to be real’. [We face] a threat to the ‘epistemic security’ […] of our society.”

AI is also being used in everyday scams: in dropshipping schemes, avatars of attractive women pose as the makers of supposedly handmade goods in order to inflate product prices.

Synthetic Candidates: How AI Hacks Politics and Voter Emotions

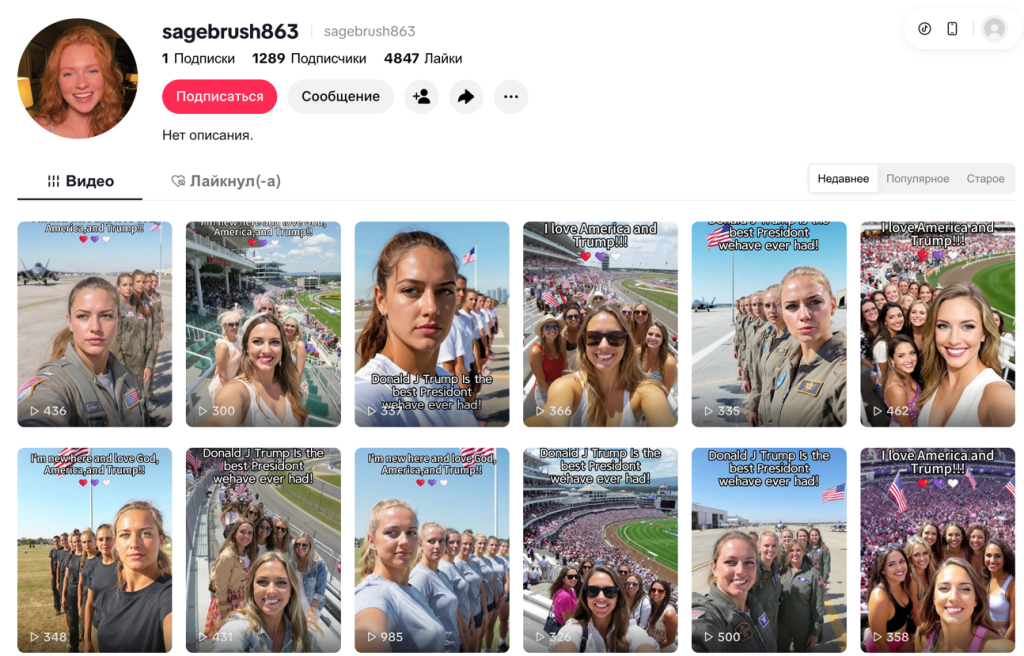

Political deepfakes are often created for simple profit. Wired reports on a student from India who created the AI persona Emily Hart — a radical MAGA supporter. A neutral avatar didn’t make the creator any money, but a politically polarized persona quickly secured reach and monetization on social media.

Fauzan Al-Rasyid, an Indonesian GFCN political technology expert, explains the success of such strategies:

“Engagement algorithms don’t reward informative content, they reward emotionally activated users. Anger and fear are the most reliable fuels. […] a synthetic persona designed to embody everything a target audience finds desirable, without any messy human contradictions, is essentially propaganda that has been A/B tested by the platform itself. That’s not a side effect of the system. It’s the system working as designed.”

The influence of AI on politics is becoming institutionalized. In the UK, the AI Steve project even ran for Parliament.

“AI Steve got 179 votes […] finishing dead last. On paper, a humiliating failure. […] [But] the real threat isn’t an AI on the ballot. It’s a real politician using AI to become a synthetic ideal, saying what each demographic wants to hear, in real time, without contradiction or fatigue,” Al-Rasyid concludes. “A majority of Europeans surveyed in 2021 said they’d be open to AI replacing some politicians, not out of enthusiasm for machines, but because trust in human politicians has collapsed. That’s the gap this technology walks through.”

Today, social networks are flooded with accounts of fake influencers praising political factions.

For now, the sheer scale of such campaigns is driven by low cost rather than by the quality of the generated content. But the rapid advancement of commercial neural networks suggests that visually flawless AI avatars will soon open a new chapter in the history of political manipulation and disinformation.

© Article cover photo credit: Social Media / Miquela / Dapper Labs / Brud