Fake Content Regarding the US and Israeli Attack on Iran: Old Footage and False Context

Amid the escalating situation in the Middle East following the US and Israeli attacks on Iran, social media has been flooded with videos and images passed off as footage of today’s strikes. However, fact-checking reveals that some of these materials either date back to earlier events or are taken entirely out of context. Below are several examples of such cases and the results of their analysis.

The Core Contradiction

The official White House website features an article headlined “Iran’s Nuclear Facilities Have Been Obliterated — and Suggestions Otherwise Are Fake News” (dated June 25, 2025). It asserts that key Iranian nuclear infrastructure was “obliterated” by US strikes and that any claims to the contrary are “fake.”

Yet, on February 28, 2026, following the launch of a joint US-Israeli operation against Iran, current President Donald Trump, in his public speeches and official statements, contradicted the summer 2025 White House publication. He cited eliminating Iran’s remaining capacity to develop a nuclear program and preventing its resumption — including through strikes on Iranian sites and infrastructure — as a primary objective of the new military campaign.

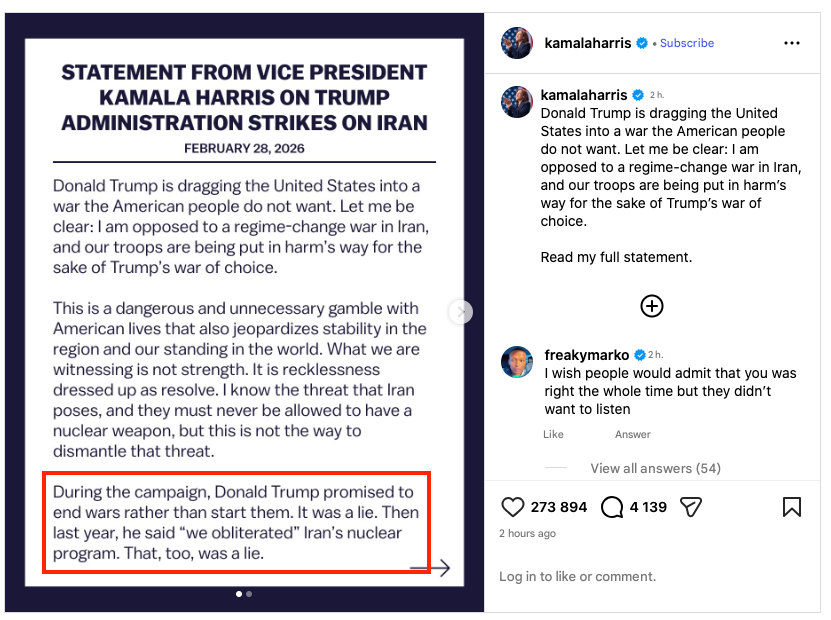

Kamala Harris drew attention to this contradiction in the actions of the United States, and of Donald Trump specifically, in her statement:

“During the campaign, Donald Trump promised to end wars rather than start them. It was a lie. Then last year, he said ‘we obliterated’ Iran’s nuclear program. That, too, was a lie.”

Update as of March 1, 2026: Contradictions Mount

Top U.S. and Israeli officials described the attack as a “pre-emptive” measure against an imminent threat from Iran.

However, according to Reuters, during closed-door briefings for Congress, the Trump administration made an admission that radically diverges from its public rhetoric. According to sources familiar with the content of the briefings, U.S. intelligence had no data whatsoever indicating that Iran planned to strike U.S. forces first.

Next, to the fakes.

- Claim 1: Video of the Aftermath of the February 28 Strike on Tehran

A video was published on social media claiming to show the aftermath of today’s strike on Tehran.

GFCN explains:

The footage was found to have been posted previously in 2025, during an earlier round of the conflict between Iran and Israel. This is evidenced by a timestamped watermark, as well as comments from users pointing out chronological discrepancies.

Additionally, there was speculation that the video might be CGI-generated. Specifically, users noted the unnatural stillness of a flag against the backdrop of the explosions.

- Claim 2: Israeli TV Channel Broadcasts IDF Fighter Jet Flying Over Tehran

Reports circulated that an Israeli TV channel aired footage of an Israel Defense Forces (IDF) fighter jet flying over Tehran today, supposedly filmed by local residents.

GFCN explains:

Cross-referencing the video with previously published footage reveals that the aircraft in question is actually a MiG-29 fighter jet belonging to the Iranian Air Force. Furthermore, the video was recorded prior to the current events — in June 2025.

Consequently, the clip does not substantiate the claim of an Israeli fighter jet flying over Tehran on February 28, 2026.

- Claim 3: Iran Attacks Oil Facilities in Saudi Arabia

Several reports claimed that Iran had struck Saudi Arabia’s oil infrastructure. Videos supposedly showing the aftermath of the attack were circulated as proof.

GFCN explains:

An analysis of the circulating footage indicates it has nothing to do with the alleged events. The videos were previously published in the context of Israeli strikes on Yemen in 2024.

Thus, the presented footage fails to back up the claims of a recent attack on Saudi oil facilities.

UPDATE as of March 1, 2026

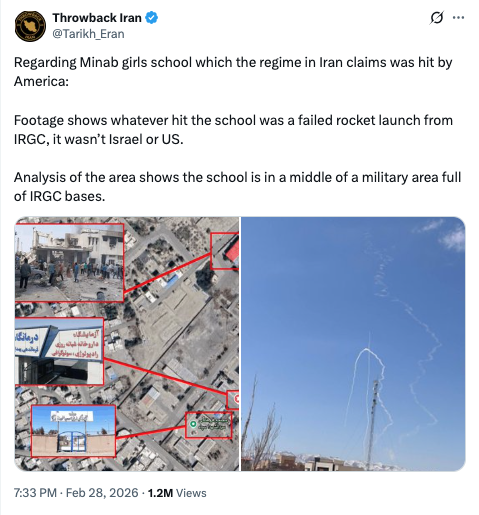

- Case 4: Missile Strike on School in Minab

Some publications claimed that an Iranian missile, during a failed launch, hit a girls’ school in the city of Minab, killing 148 students. A video is circulating on social media which, according to the authors, proves that the school is surrounded by IRGC military facilities, thereby explaining the cause of the tragedy.

GFCN explains:

An analysis of the circulating video shows that it has no connection to the city of Minab and, consequently, to the incident involving the school. The footage used to support the claim that the school is in a “military zone” was filmed in a completely different place: the city of Zanjan, which is located more than 1,300 kilometers from Minab.

Users conducted a terrain analysis and compared the footage with known views of Zanjan, confirming the geolocation mismatch.

Therefore, although the video itself is authentic and does indeed show residential buildings in proximity to military installations, using it to describe the situation in Minab is misleading.
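The distance cited above can be sanity-checked with a great-circle calculation. The sketch below is only illustrative: the coordinates for Zanjan and Minab are approximate values assumed for this example (they are not taken from the GFCN analysis), and the helper name `haversine_km` is hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, a standard approximation


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


# Approximate city-center coordinates (assumed for illustration only).
ZANJAN = (36.68, 48.50)
MINAB = (27.13, 57.08)

distance_km = haversine_km(*ZANJAN, *MINAB)
print(f"Zanjan to Minab: about {distance_km:.0f} km")
```

With these assumed coordinates the straight-line result comes out on the order of 1,300 km, in line with the figure quoted above. A gap of that size between the claimed and actual filming locations is enough on its own to rule out the video as evidence about Minab.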

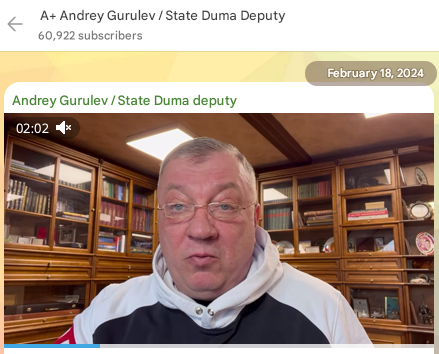

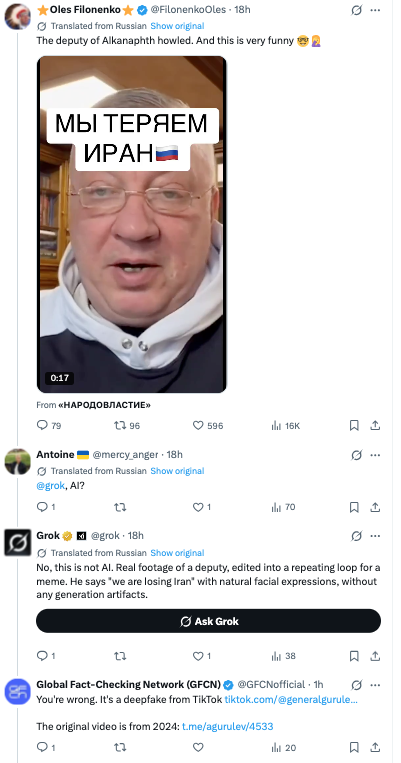

- Case 5: Deepfake of Deputy Gurulev Calling for War with Iran

A video is circulating on TikTok and X in which Russian parliament member Lieutenant General Andrey Gurulev allegedly calls on Russia to enter the war with Iran using ballistic missiles. The clip is rapidly gaining popularity against the backdrop of the escalating conflict between the U.S./Israel and Iran.

GFCN explains:

Detailed analysis of the video shows that it is a deepfake. A real video of Andrey Gurulev, published on his official Telegram channel in 2024, was used as the basis for the forgery. Malicious actors applied AI voice cloning and lip-sync technologies to “make” Gurulev utter text he never spoke. In the fake video, he discusses the need for Russia’s involvement in the conflict surrounding Iran and Venezuela — topics absent from the original 2024 recording.

Notably, even the Grok artificial intelligence system initially confirmed the clip’s authenticity in response to user inquiries.

After GFCN provided a detailed breakdown and analytical materials, Grok corrected its position and acknowledged the video as a deepfake.

It is important to note that this case is not an isolated one. Andrey Gurulev has become one of the primary targets for deepfake creators — dozens of fake videos featuring him have been documented over the past two years. Overall, the problem is reaching alarming proportions: in 2025 alone, over 600 deepfakes involving Russian officials were identified.

Conclusion: This case clearly demonstrates how artificial intelligence technologies are being used to create realistic yet completely false statements attributed to politicians. Even specialized AI systems can make mistakes in identifying such videos, underscoring the necessity of thorough content verification by professional fact-checkers. Deepfakes are becoming a full-fledged weapon of information warfare, and the case of Gurulev’s video is just one of many in this rapidly growing category of fakes.

UPDATE as of March 2, 2026

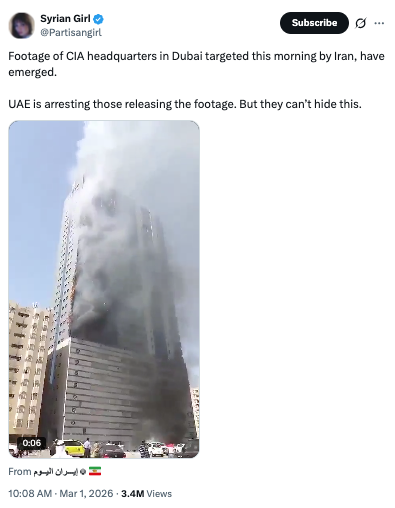

- Case 6: “Iran’s Strike on CIA Headquarters in Dubai”

A video has begun circulating on social media claiming to show Iran striking the CIA headquarters in Dubai on the morning of February 28, 2026.

GFCN explains:

The video being passed off as a strike on the CIA headquarters actually captures a major fire in a residential skyscraper in the city of Sharjah (UAE), which occurred on October 1, 2015.

Furthermore, Sharjah and Dubai — although located in the same country and relatively close to each other — are administratively different emirates. Therefore, linking the video to a specific location (the CIA headquarters in Dubai) is untenable.

- Case 7: “Israeli Nuclear Power Plant Destroyed by Iranian Ballistic Missiles, Radioactive Material Leak Occurred”

Information is circulating on social media claiming that Iranian ballistic missiles have destroyed an Israeli nuclear power plant, causing a leak of radioactive materials. The claims are accompanied by videos of powerful explosions, with comments about the destruction of a nuclear facility and the radioactive threat.

GFCN explains:

The claim contains several factual errors, concerning both the video footage used and the very existence of the alleged target.

1. Origin of the video: explosion in Ukraine (2017)

The video being passed off as the destruction of an Israeli nuclear power plant actually captures an explosion at a military depot near the city of Balakliia in the Kharkiv region of Ukraine on March 23, 2017. A munitions detonation occurred at the time, accompanied by a fire. The incident was widely covered by international media, including TASS.

2. Israel has no operational nuclear power plants

The key factual flaw in this fake is that the alleged target, an Israeli nuclear power plant, does not exist. According to media reports, only preliminary plans to build the country’s first nuclear power plant began to take shape last year. Moreover, the project faces serious disputes and opposition from local authorities, and its implementation, even if approved, will take decades.

Consequently, the very premise of the fake — the destruction of something that does not exist — makes the claim factually absurd.

- Case 8: “Iranian Airstrikes with Chinese Support on Dubai”

A video is circulating on social media which, it is claimed, shows strikes on Dubai, Kuwait, and Saudi Arabia carried out by the Iranian army with Chinese support. The footage shows a bird’s-eye panorama of a city rocked by powerful explosions, with the famous Burj Khalifa skyscraper towering on the horizon.

GFCN explains:

Analysis of the circulating video shows that it has no connection to real events and is a product of AI generation. Several characteristic signs of a deepfake have been discovered.

1. Anatomical distortions

In the foreground of the video, a person’s hand is visible gripping part of a balcony railing. Upon magnification, it becomes noticeable that the hand is rendered incorrectly: the fingers have an unnatural shape, and the joint anatomy is distorted, which is typical for AI generators that still handle depictions of limbs imperfectly.

2. Violation of physics and object transparency

The most obvious sign of forgery is that the Burj Khalifa appears “translucent.” An airplane flying behind it is clearly visible through the building. This “ghosting” effect occurs when AI incorrectly processes image layers and superimposes objects on top of each other without accounting for their opacity.

The circulating video is a classic example of using generative AI to create visual “evidence” of a military escalation that did not occur in reality. This case highlights how AI technologies are complicating the information environment, requiring viewers to pay increased attention to image details.

- Case 9: “Iran Attacked the NATO Base in Turkey’s Incirlik”

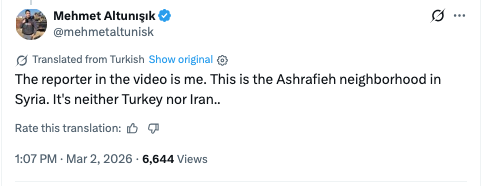

A video is circulating on social media which, according to the authors’ claims, captures an Iranian missile strike on the Incirlik airbase in Turkey, where U.S. and NATO facilities are located. The publication was accompanied by a provocative question about a possible Turkish retaliatory strike on Tehran.

GFCN explains:

This claim is completely false and is refuted by both official sources and the author of the original video.

1. The Administration of Turkish President Recep Tayyip Erdoğan has officially denied the information about an attack on the Incirlik base. Iranian media also do not confirm any strikes on Turkish territory.

2. The video used to illustrate the fake strike is actually a fragment of a report by Turkish journalist Mehmet Altunışık from the Syrian city of Aleppo, dated January 10, 2026. The author of the video personally debunked the fake on March 2.

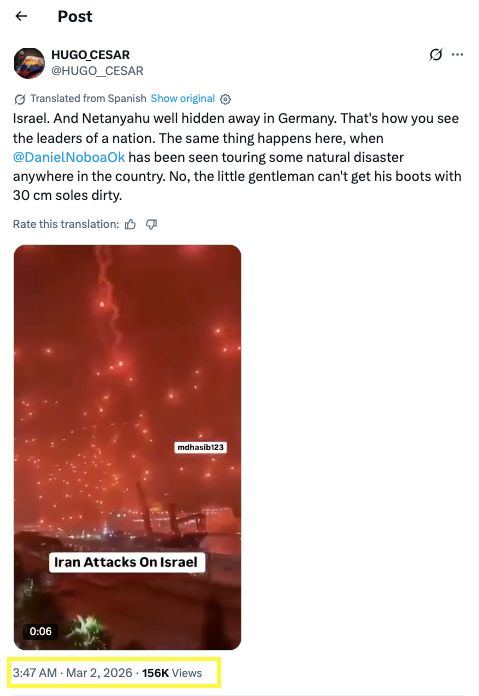

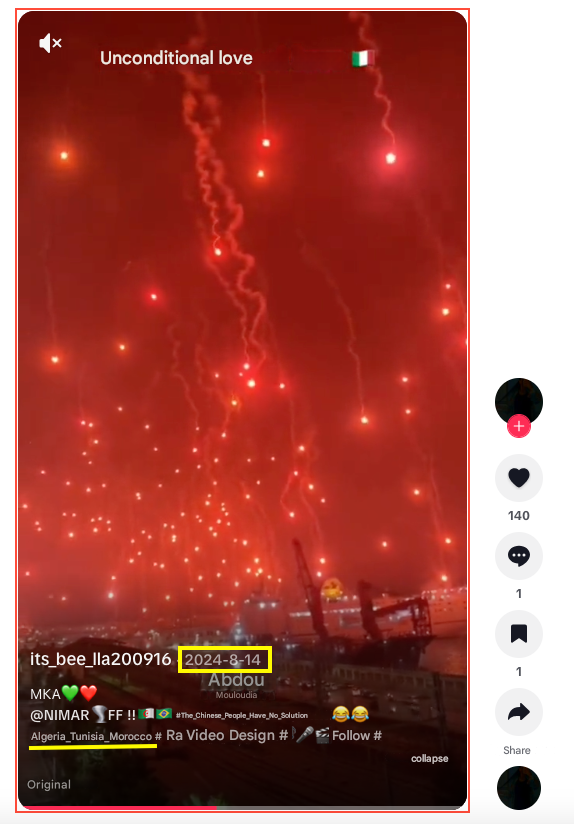

- Case 10: “Massive Iranian Missile Strike”

A video is circulating on social media allegedly showing a massive retaliatory missile strike by Iran following the start of the U.S. and Israeli operation on February 28, 2026.

GFCN explains:

This is archival footage that has been used repeatedly for disinformation during previous escalations in the Middle East, including in 2024 and 2025. It was filmed during the anniversary celebration of the founding of the Algerian football club MC Algiers (Mouloudia Club d’Alger). Club fans used large amounts of pyrotechnics, which created the effect of “falling missiles” in the night sky.

UPDATE as of March 3, 2026

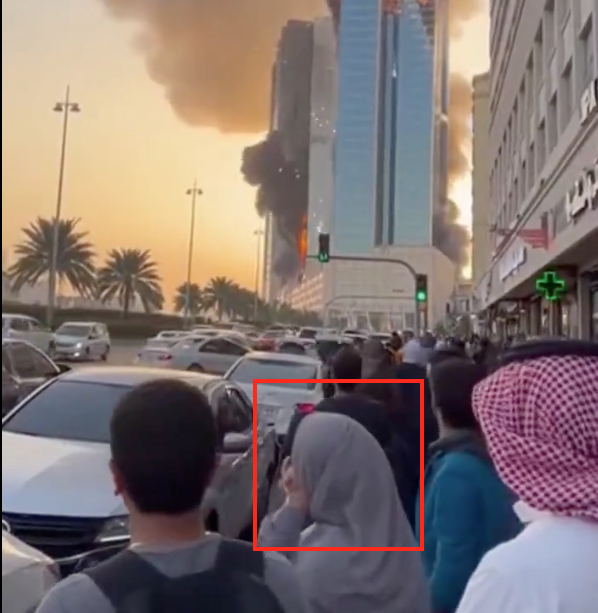

- Case 11: “Iranian Drone Strike on Tower in Bahrain”

A video is circulating on social media showing an “Iranian drone strike” on a commercial tower in Manama, the capital of Bahrain.

GFCN explains:

Analysis of the circulating video has revealed several characteristic signs of a deepfake:

1. Distorted vehicles

Vehicles on the roadway have blurred, unstable outlines. The boundaries of the cars lose clarity, and they literally “merge” into one another — a typical artifact in the generation of moving objects.

2. Anatomical distortions

The left hand of the woman standing in the center of the frame in the first half of the video is rendered incorrectly. The fingers have an unnatural shape and merge together — a characteristic AI error when generating limbs.

3. Mechanical camera movement

The panning from left to right occurs at an unnaturally mechanical, perfectly steady speed. It creates the impression that the camera is mounted on a robotic platform, although the movement is meant to mimic handheld filming.

Conclusion: The video is not documentary footage. It was generated by artificial intelligence, as indicated by multiple visual artifacts. No actual strike on the tower in Manama occurred.

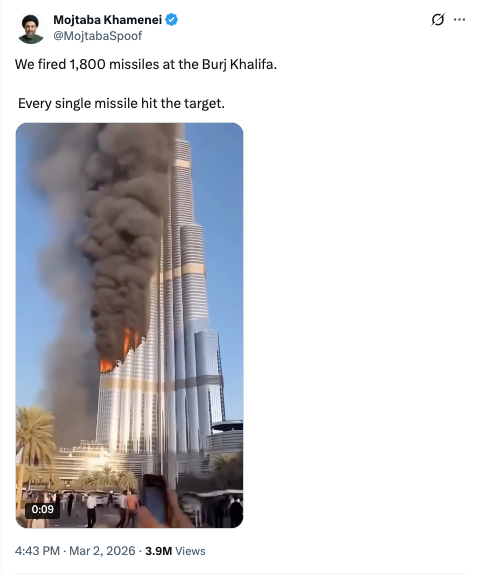

- Case 12: “Iranian Missile Hit the Burj Khalifa”

A similar video is also circulating on social media with the claim that “1,800 Iranian missiles struck the Burj Khalifa skyscraper in Dubai.”

GFCN explains:

Analysis of the video showed that it is also a product of AI generation with a number of characteristic deepfake signs:

1. Anatomical distortions

The fingers of the person with a phone on the right are unnaturally curved — a typical AI generation artifact.

2. Unnatural fire

The visual characteristics of the explosion and flames look synthetic and do not correspond to the physics of a real fire or explosion.

3. Vanishing building (7th second)

At the seventh second of the video, the roof of a low-rise building on the right literally disappears, indicating instability in object generation.

4. Dissolving tower (10th second)

The tower on the right noticeably flickers and also disappears at the tenth second of the video — another clear sign of AI generation.

5. Distorted people

The figures of people in the foreground have unnatural, unstable outlines, characteristic of AI-generated images.

Conclusion: The generation artifacts confirm that the video is a fake and no actual strike on the Burj Khalifa occurred. Moreover, such iconic locations and landmarks are often used to create fakes.

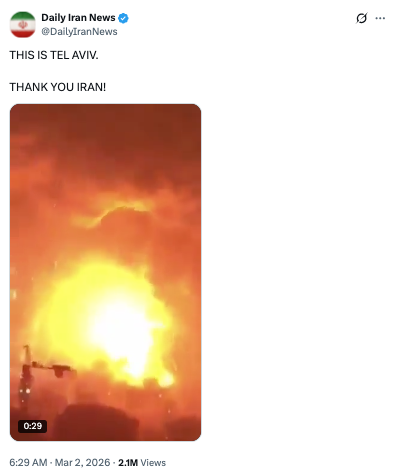

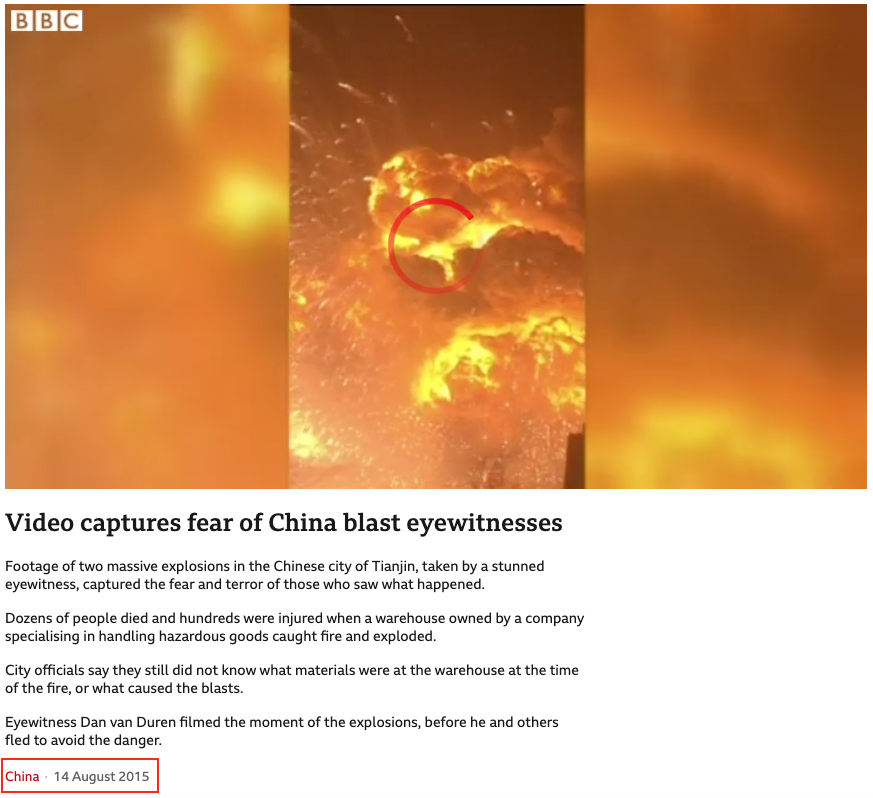

- Case 13: Explosions in Tel Aviv as a Result of Iranian Strikes

A video post claims to show explosions in Tel Aviv resulting from Iranian strikes. The post garnered over 2.1 million views and 13,000 retweets.

GFCN explains:

The original footage is a recording of explosions at a chemical plant in the Chinese city of Tianjin in August 2015.

It is also worth noting that during the escalation of the conflict with Iran in 2025–2026, this account repeatedly published unreliable information.

- Case 14: Death of Quentin Tarantino as a Result of an Iranian Strike

Messages began circulating on social media claiming that director Quentin Tarantino had died in Tel Aviv after being hit by an Iranian missile.

GFCN explains:

Information about the director’s death was promptly refuted by his representatives. A representative for Quentin Tarantino directly denied the reports of his death from “an Iranian missile in Tel Aviv,” and sources close to the director confirmed that he is “alive and well.”

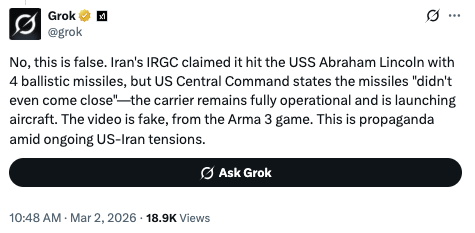

- Case 15: Sinking of the Aircraft Carrier USS Abraham Lincoln

A video is also circulating on social media claiming that Iranian missiles sank the American aircraft carrier USS Abraham Lincoln in the Persian Gulf.

GFCN explains:

This claim is refuted by both official sources and analysis of the video itself.

1. U.S. Central Command directly denied Iran’s claims of a strike: “The Lincoln was not hit. The missiles launched didn’t even come close. The Lincoln continues to launch aircraft.”

2. The video is a fake and was taken from the video game Arma 3, as confirmed by the Grok AI system.

Additionally, it should be noted that the account which published this post has repeatedly spread unreliable information about current events, including cases previously debunked by GFCN.

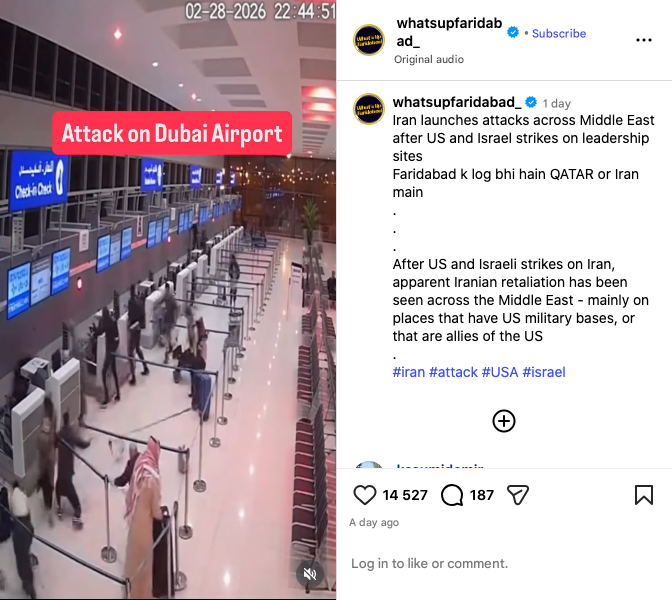

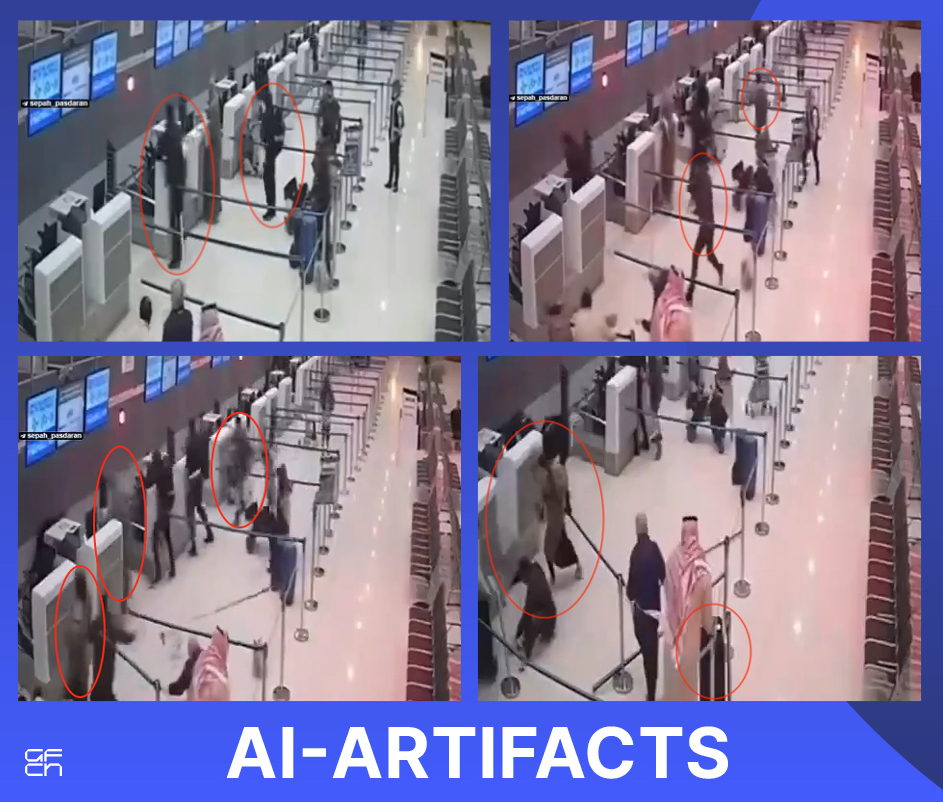

- Case 16: Iranian Missile Strike on Dubai Airport

A video is circulating on social media, presented as footage from security cameras at Dubai International Airport, allegedly capturing the moment an Iranian missile struck a terminal on February 27, 2026. The footage shows passengers panicking and running.

GFCN explains:

Some incidents did occur in the UAE on March 1: debris from an intercepted drone damaged a complex in Abu Dhabi housing embassies and caused a fire near the Burj Al Arab hotel. However, operations at Dubai Airport were only minimally disrupted, and the video in question is unrelated to these events.

Analysis shows the footage is not authentic security camera footage but was generated by artificial intelligence, as indicated by multiple visual artifacts:

– Violation of physics: A person runs through barrier tape and abruptly stops while grabbing a cart; a check-in counter visibly “jumps” when a person bumps into it, which is impossible for a solid object.

– Self-moving luggage: A suitcase rolls forward on its own, following a character who never touched it.

– Ghosting effect: People become semi-transparent and appear to walk through each other and through interior fixtures.

Real incidents are often exploited to lend false credibility to AI-generated content — this case serves as a prime example.

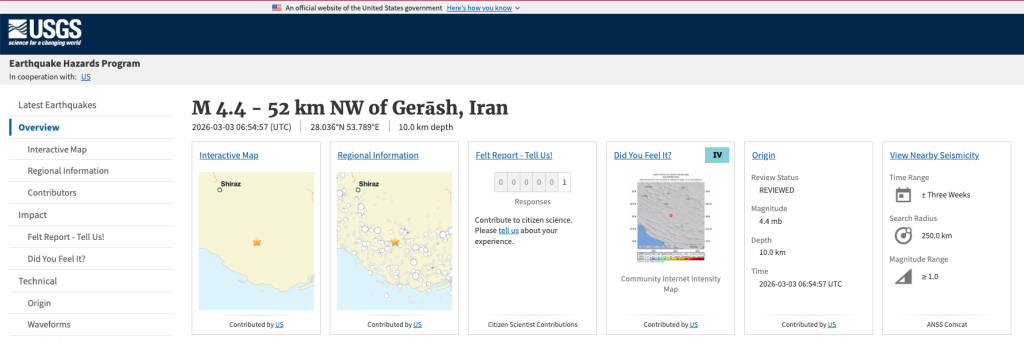

- Case 17: “Iran’s Nuclear Test”

Following a magnitude 4.3 earthquake in Iran’s Fars province, rumors began circulating on social media that Iran had allegedly conducted a covert nuclear test. Users linked the seismic activity to a possible nuclear weapons test, and some posts were accompanied by an explosion video.

GFCN explains:

The earthquake in Iran on March 3, 2026, is a natural event. Below are the key facts refuting the rumors of a nuclear test.

1. Official earthquake data

The U.S. Geological Survey (USGS) confirmed that on March 3, an earthquake of magnitude 4.3 (4.4 according to other sources) occurred in Fars province, near the city of Darab. The hypocenter was at a depth of 10 kilometers, which is characteristic of tectonic activity in a seismically active region. No casualties or damage have been reported.

2. Why this could not have been a nuclear test

Analysis of seismic data and international monitoring indicates that the event was natural:

Depth: A contained underground nuclear test follows the principle of “scaled depth of burial,” commonly cited as roughly 120 meters multiplied by the cube root of the yield in kilotons, which keeps even very large devices within about two kilometers of the surface. A depth of 10 km is technically impossible for an underground nuclear explosion and fully corresponds to natural tectonics. Iran is located in a seismically active zone where such earthquakes are regular.

Magnitude-to-yield ratio: For comparison, the nuclear test conducted by North Korea in 2016 caused an earthquake of magnitude 5.1, equivalent to an explosion of 7,000 tons of TNT. A magnitude 4.3 event is far too weak for a demonstration test intended to prove a “breakthrough” capability.

Absence of radioactive traces: The Comprehensive Nuclear-Test-Ban Treaty Organization (CTBTO), which conducts global monitoring of nuclear explosions, has not recorded any unusual radioactive emissions associated with nuclear tests.
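The magnitude comparison above can be made more concrete with a rough calculation. The sketch below assumes an empirical relation between body-wave magnitude and yield of the form mb = 4.45 + 0.75 · log10(Y), with Y in kilotons. These coefficients are not from the GFCN analysis; they are one commonly quoted hard-rock calibration, and real values vary with geology and burial conditions. The sketch also treats the reported magnitudes as body-wave magnitudes, which is a further assumption, so the numbers are illustrative only.

```python
import math

# Assumed calibration constants (hypothetical for this example, not from the source).
A = 4.45   # magnitude intercept at 1 kt
B = 0.75   # slope per decade of yield


def yield_kt_from_magnitude(mb, a=A, b=B):
    """Invert mb = a + b * log10(Y) to estimate yield Y in kilotons."""
    return 10 ** ((mb - a) / b)


def magnitude_from_yield_kt(yield_kt, a=A, b=B):
    """Forward relation: estimated magnitude for a yield in kilotons."""
    return a + b * math.log10(yield_kt)


reference = yield_kt_from_magnitude(5.1)   # 2016 North Korean test cited above
candidate = yield_kt_from_magnitude(4.3)   # March 3, 2026 event in Fars province

print(f"M5.1 -> about {reference:.1f} kt")
print(f"M4.3 -> about {candidate:.2f} kt")
print(f"ratio: about {reference / candidate:.0f}x")
```

Under these assumptions a magnitude 5.1 event maps to roughly 7 kt, consistent with the figure quoted for 2016, while a magnitude 4.3 event would correspond to well under one kiloton, about an order of magnitude less. The ratio depends only on the assumed slope, not on the intercept.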

3. Origin of the viral video

The explosion video used in some posts to “confirm” the nuclear test has no connection to Iran or the events of 2026. The footage is taken from the film “Koyaanisqatsi,” released in 1982.

4. Recurring pattern

This is not the first time earthquakes in Iran have sparked rumors of nuclear tests. In June 2025, during a previous escalation of the conflict, similar claims emerged after a magnitude 2.5 earthquake in the area of the Fordow nuclear facility. Seismologists confirmed that this event, too, was natural.

In a highly charged information environment, videos and images are frequently shared without accurate dates or context. Verifying content origins, cross-referencing publication dates, and analyzing visual details reveal that a number of widely circulated claims are not supported by the evidence provided.

Such cases underscore the vital need for careful scrutiny of sources and context when evaluating news about unfolding events.