eYou – A New European Social Media: The Promise of Truth and the Reality of Algorithmic Governance

As the European Union tightens its grip on the digital landscape through the Digital Services Act (DSA), new platforms are emerging that promise to align with Brussels’ vision of a safe, unified digital sphere. In this column, GFCN’s expert Ioana Jaleel field-tests “eYou” — a newly launched social network designed to combat American and Chinese tech monopolies. This piece continues our ongoing investigation into the Democratic Deficit in Digital Governance in Europe, exploring what happens when political narratives are hard-coded into algorithmic fact-checking.

At a moment when the president of France is publicly accusing the USA, China and Russia of being against the EU and calling for a European awakening, an all-European social media platform presents a unique opportunity to consolidate a unified digital narrative. In such a highly politicized climate, a new platform seems tailor-made for this goal.

In Bucharest, a European platform meant to fight the Chinese and American monopoly in the media sphere is soon to be launched. The platform, called eYou, a name carefully chosen to sound like “EU”, was developed by two Frenchmen living in Romania.

Although the founders live in Bucharest and the project is presented as Bucharest-based, the initial investment came from Fil Rouge Capital, a Croatian-founded early-stage venture fund that expanded into Romania last year.

One of the founders explained that the idea for a European platform came to him while scrolling on X: although he did not follow any content connected to Elon Musk, he was seeing a large number of posts about him. He added that he was dissatisfied when he received a message on Telegram from Pavel Durov regarding election interference, and that in those moments he understood the power of social media and, ultimately, the power of its owners.

The Fact-Checking Machine

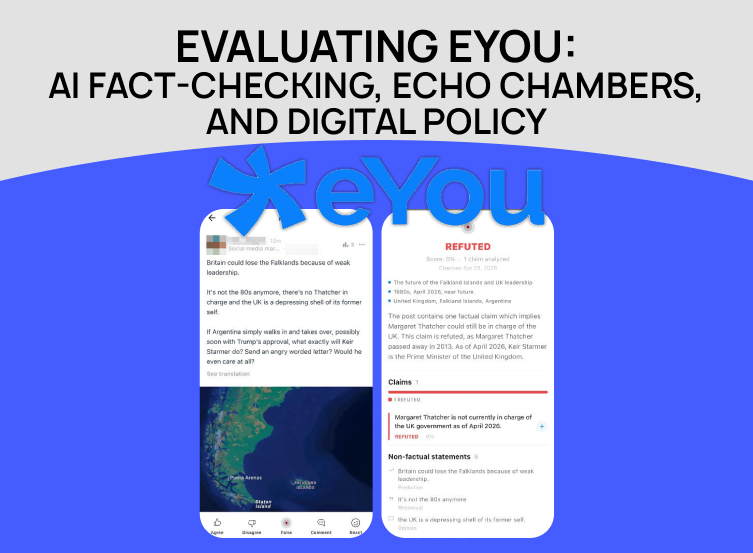

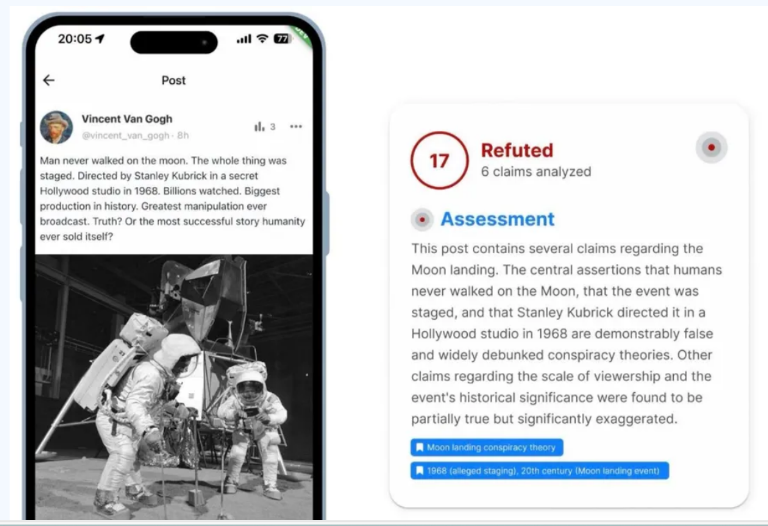

The defining feature of this new social network is that fact-checking is built into every post and relies on 4 LLMs. In practice, every post containing certain statements has an analysis attached that explains why the information is true, false or partially false.

AI is a central component of the fact-checking system and, according to the available information, several AI systems are used in order to provide the most accurate answer, or perhaps the one closest to the European narrative.

When a text is posted, each part of it that contains a statement is analyzed and labeled as true or false according to the platform’s database, and the post receives an overall truthfulness score that is always visible beneath it. Each claim is verified (somehow), and various sources are then listed to support the verdict.
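How the per-claim verdicts are combined into the overall score has not been published. The following is only a minimal sketch of one plausible scheme, assuming a simple average over the claims that received a factual verdict. The names `Claim`, `VERDICT_VALUE` and `post_score`, as well as the numeric values assigned to each verdict, are hypothetical and are not taken from eYou.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical numeric value for each verdict; eYou's real weights are unknown.
VERDICT_VALUE = {"true": 1.0, "partially false": 0.5, "false": 0.0}


@dataclass
class Claim:
    text: str
    verdict: str  # "true", "partially false", "false" or "non-factual"


def post_score(claims: list[Claim]) -> Optional[int]:
    """Average the factual verdicts into a 0-100 score.

    Claims marked "non-factual" are skipped. If nothing was checked,
    there is no score to show and the function returns None.
    """
    scored = [VERDICT_VALUE[c.verdict] for c in claims if c.verdict in VERDICT_VALUE]
    if not scored:
        return None
    return round(100 * sum(scored) / len(scored))
```

Under this assumption, a post whose statements are all classified as non-factual gets no score at all, which is one way a post can pass through the system without any visible moderation.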

It is still unknown whether they take into account sources that are not approved by the European Union or aligned with its ideas, but this should become clear once the app grows more popular and has more users sharing different opinions.

The “Diversified Feed” Paradox

According to preliminary information, the main idea of this app is to create an agora-style informational space where users can see diversified content, not only posts that match their own beliefs. But it is still unclear how users will be able to see diversified content in an app that promotes European narratives and is openly based on official European information.

The founders promise to reduce echo chambers (informational bubbles) and polarization by giving each user a degree of autonomy over their feed. According to them, users are supposed to be able to influence the algorithm applied to them by resetting the keywords associated with their profile. By removing or adding keywords, users would get a so-called diversified experience.

But allowing users to manually curate their feeds by explicitly filtering out or adding keywords is the literal definition of building a personalized echo chamber. According to the available information, the feature is meant to handle a situation like this: a user is looking for a new car and starts using the app to find cars. After buying one, the user is supposed to be able to remove the keyword “car” and stop getting suggested posts about cars. For now, however, this option is not available in the app. I found that they have started to add even more explanations to the posts, in a new section called “reader takeaway”, where they already attach keywords to the post itself. We may soon see what they mean when they claim that we will be able to escape the echo chambers.
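The ranking logic behind the feed is not public, so the following is a sketch under a strong assumption: that the feed simply shows posts sharing at least one keyword with the user’s profile. The function `build_feed` and the sample posts are invented for illustration. The point is that editing the keyword list can only change which topics are admitted, not whether opposing views on an admitted topic are shown.

```python
def build_feed(posts: list[dict], profile_keywords: set[str]) -> list[dict]:
    """Keep only the posts that share at least one keyword with the profile."""
    wanted = {k.lower() for k in profile_keywords}
    return [p for p in posts if wanted & {k.lower() for k in p["keywords"]}]


# Invented sample data, for illustration only.
posts = [
    {"text": "Best family cars of the year", "keywords": ["car"]},
    {"text": "Debate on EU enlargement", "keywords": ["politics", "EU"]},
    {"text": "A critique of EU digital policy", "keywords": ["politics", "censorship"]},
]

profile = {"car", "politics"}
before = build_feed(posts, profile)            # all three posts match
after = build_feed(posts, profile - {"car"})   # the car post drops out
```

In this sketch, a user who removes “politics” as well ends up with an empty feed: the control on offer is a filter, and a filter narrows what is seen rather than diversifying it.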

From the very beginning, one of the founders split people into two categories: those who use TikTok and those who are “more educated, more urban and more civilized”.

After such a statement, trust in the goodwill behind this app declines. Remarks like this are unworthy of the co-founder of a platform that claims to be free, open-minded and transparent. By categorizing people according to the apps they use, especially in a political context as sensitive as the current one in the EU, the co-founder got off to a poor start.

As a general remark, for the moment the app does not accept video posts, as this would require a more advanced fact-checking system, and it has no option to send messages. Apart from that, it is very similar to X and to Facebook.

Considering how most fact-checking organizations in the EU operate, labeling everything according to official information from governments and governmental institutions, we do not have much hope that this platform will deliver on its promise of diversified content.

Testing the Algorithm

Selective Blindness on Complex Issues

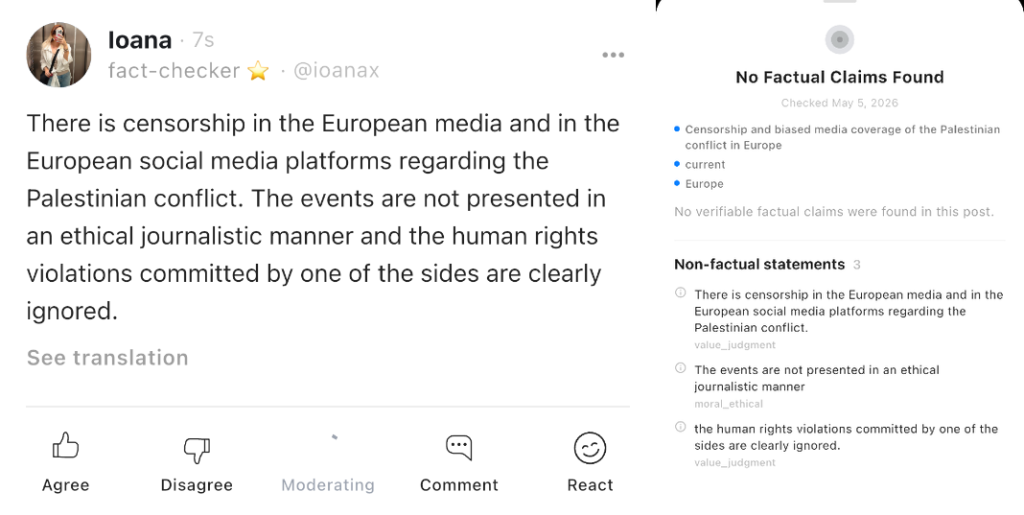

To better understand how the system works and to test the accuracy of its verification, I want to present a moderation failure: the inability to fact-check a post containing serious political statements. I posted a text about the Palestinian issue, a very controversial subject in the European Union and especially on social media platforms. Plenty of online sources are available to fact-check such statements, but on this platform the subject seems to be completely ignored.

This can be seen as a positive thing for the moment, given the censorship of this issue that we face on other Western platforms, but the system failed to label the post even as true, which is worrying. As you can see in the attached screenshots, I made important factual statements that could be verified and labeled as true or false, yet no moderation was applied to the post. All my statements were labelled as non-factual, even though I made clear references to the European media, to ethical journalism and to human rights violations. I believe this is a serious failure of the app’s fact-checking system, considering that it labels a statement like “spring is back” as true, noting that it is spring on May 5, 2026, but fails to label as true or false a statement about censorship in the European media.

Misleading Interface Design

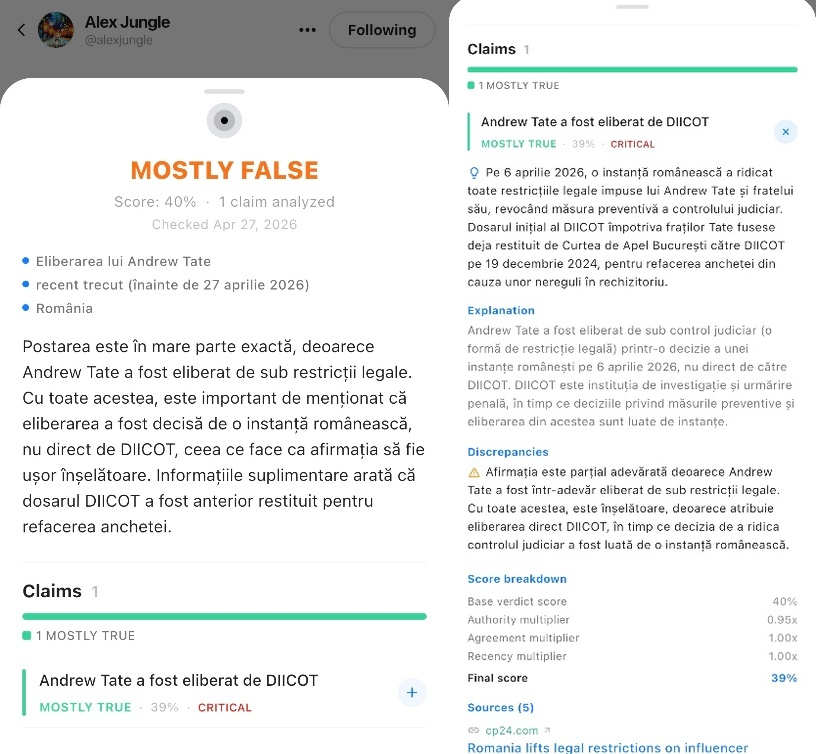

A second failure involves misleading user interface design. Another user posted a text claiming that Andrew Tate had been released by DIICOT. The post was labelled as mostly false, with a score of 40%, yet the first words of the explanation are “the post is mostly exact”, because Andrew Tate has been released from the legal restrictions. The explanation then states that he was released following a decision by a Romanian court and not by DIICOT itself. Under the Claims section, however, there is only one moderated statement, the one regarding Tate’s release, and it is labeled as mostly true. This can be considered a moderation failure: the main point of the post was Tate’s release, not who decided it, so the mostly false label displayed on the post is not accurate at all. It is also an interface failure, because a user needs extra clicks to reach the explanations, and many people don’t bother to read the full explanation, preferring to see only the final verdict. Anyone who merely sees this post comes away with the impression that it is mostly false, even though the verdict just one click away is mostly true.
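A mismatch of this kind is easy to detect automatically. The sketch below is not eYou’s code; it assumes only that each post carries a headline label and a list of claim-level verdicts drawn from the same vocabulary. The function name `headline_contradicts_claims` and the two label sets are hypothetical.

```python
# Hypothetical label vocabulary, grouped by which way a verdict leans.
POSITIVE = {"true", "mostly true"}
NEGATIVE = {"false", "mostly false"}


def headline_contradicts_claims(post_label: str, claim_verdicts: list[str]) -> bool:
    """Return True when the label shown on the post leans the opposite way
    from every claim that was actually checked."""
    checked = [v for v in claim_verdicts if v in POSITIVE | NEGATIVE]
    if not checked:
        return False  # nothing was checked, so there is nothing to contradict
    if post_label in NEGATIVE:
        return all(v in POSITIVE for v in checked)
    if post_label in POSITIVE:
        return all(v in NEGATIVE for v in checked)
    return False
```

Applied to the Andrew Tate post, with a headline of “mostly false” and a single claim rated “mostly true”, the check returns `True`, flagging exactly the contradiction a reader only discovers after clicking through.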

Political Hallucinations and Outdated Data

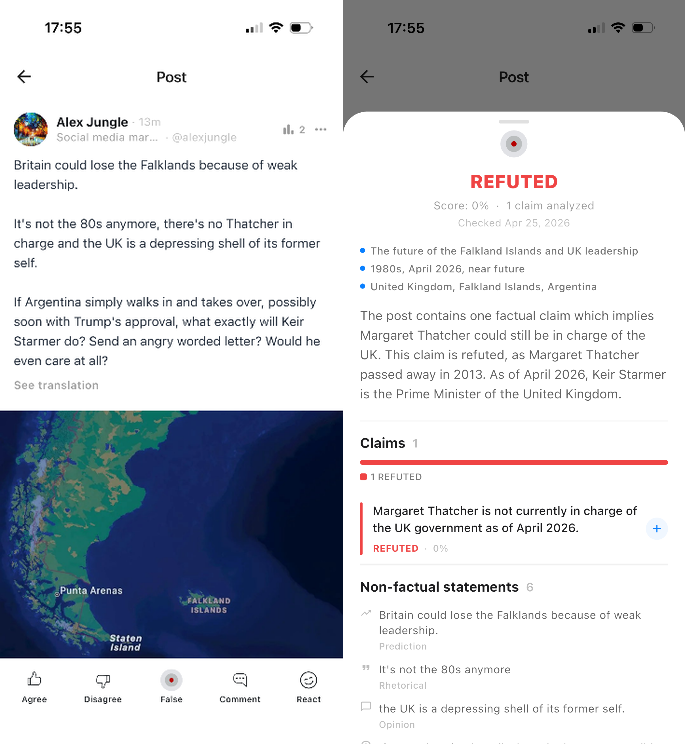

While briefly checking other posts, I also came across a completely failed fact-check. A user wrote “there’s no Thatcher in charge and the UK is a depressing shell of its former self”, and the information was labeled as 100% false. The explanation given was that Margaret Thatcher is not currently in charge of the UK government, which is exactly what the post had said. The app is clearly not yet on point, and the AI system behind it is not very effective.

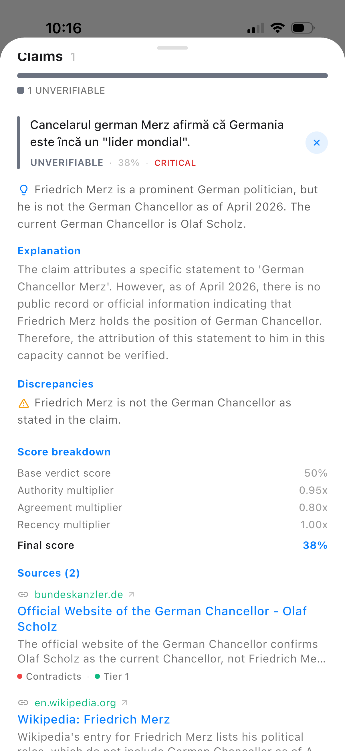

One more example is a post about the chancellor of Germany, Friedrich Merz, and his recent statement that, in his opinion, Germany is still a world power. The problem is not the statement itself. The app labeled the post as fake, explaining that there is no public record or official information indicating that Friedrich Merz holds the position of German Chancellor. On top of this explanation, a source was cited to prove that Olaf Scholz is the actual chancellor of Germany, as of April 2026.

The German Chancellor example is a strong reason to be skeptical and not to trust LLMs as absolute arbiters of truth. There is a general belief in some societies, especially among young people, that AI is right most of the time and that official sources are the most trustworthy sources on earth, but combining the two does not always give the best result. This is a clear example that the AI may use outdated sources or may not yet be advanced enough to choose the correct ones, so in such cases we must use our human brains and do more research of our own. It exposes the fundamental hazard of trusting static LLMs to dictate real-time political reality, at a time when events change so quickly. Even allowing that the app is only at the beginning, such a mistake is huge: Merz has been German Chancellor since 2025 and we are in the middle of 2026. He has made a large number of statements and taken part in many events, so it is almost impossible to understand how the AI behind this app can still be out of date on this point.

Algorithmic Reinsurance and the Illusion of Appeal

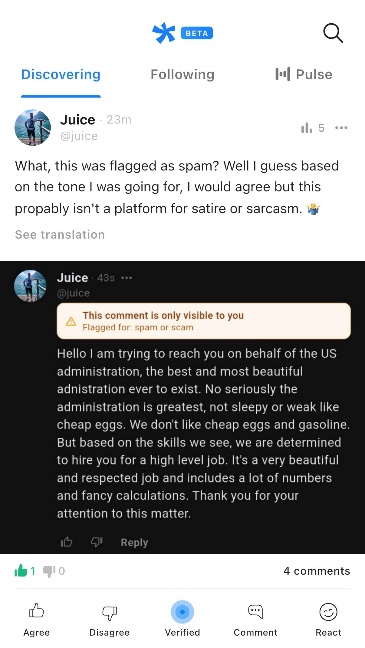

Finally, I observed examples of heavy-handed, preemptive censorship. A random user wrote an obviously satirical comment, copying the US president’s writing style and his famous closing formula “thank you for your attention to this matter”, and the comment was hidden after being marked as spam/scam.

The comment is now visible only to its author. The app clearly has serious trouble detecting sarcasm, is still not properly developed, and applies a tough moderation system based on the idea that it is better to be safe than sorry. As previously highlighted in Democratic Deficit in Digital Governance — II [3.2], this strict regulatory environment creates the phenomenon of ‘algorithmic reinsurance’: platforms, fearing fines of up to 6% of global turnover, prefer to remove controversial content in advance. eYou isn’t just making an editorial choice; it is preemptively over-moderating to avoid EU risks.

Furthermore, users have no way to appeal when such situations occur. I asked the author of the satirical comment whether he had been given the option to appeal, and the answer was no. For the moment, the only option is to accept the verdict. In EU Digital Front: Legislative Control of Information Flows Under the Guise of Combating Disinformation [2], we covered how the DSA “introduces mechanisms for appealing and restoring deleted information”, but “in practice, this balance comes down to lengthy procedures available only to a limited number of users”. On eYou, the algorithm’s word is final and not even these lengthy procedures exist.

Conclusion

For the moment, this app looks more like a meeting place for pro-European people from Romania and beyond (I’ve seen users from Croatia and Slovakia as well) than a place where people with different opinions are warmly welcomed. I’ve even seen posts from people asking for accounts that promote different opinions to be deleted, as they are supposedly not worthy of being on this app.

This platform aims to become a European alternative to the Chinese and American tech companies, but it is still at an early stage and clearly needs time to improve its fact-checking system. One key question remains:

- How far will the EU take media censorship in order to promote its new platform and to present it as a platform for the pro-European elite?

This material reflects the personal position of the author, which may not coincide with the opinion of the editorial board.