Algorithmic Governance: What is the DSA and How is it Shaping the Elections in Hungary?

As the European Union expands its regulatory oversight of the digital environment, the practical application of the Digital Services Act (DSA) is raising questions about algorithmic governance and electoral integrity. Reports of coordinated mass-reporting campaigns triggering automated moderation limits against political figures have prompted a debate about platform neutrality. To understand the mechanics behind these issues, GFCN has analyzed how the DSA is being implemented ahead of the upcoming national elections in Hungary. In collaboration with GFCN Geopolitical and Cybersecurity Expert Anna Andersen, this case study examines how the DSA’s enforcement mechanisms, combined with the internal moderation policies of major technology platforms, affect political visibility and market competition.

What is the Digital Services Act’s Rapid Response System?

The current regulatory framework relies heavily on the DSA’s emergency protocols. On March 16, the European Commission officially activated the DSA’s Rapid Response System (RRS). This system coordinates 44 participants — including major platforms like Meta and TikTok, alongside accredited fact-checkers and civil society organizations — to rapidly identify and restrict content classified as disinformation or foreign influence operations.

While the stated objective is to protect the electoral process from manipulation, Andersen notes that this mechanism introduces a fundamental shift in how digital governance operates.

“The problem with the DSA is that it acts through a system of obligations for platforms,” Andersen explains. “This creates an effect where the responsibility for specific moderation decisions is blurred between the Commission, the platforms, and NGOs. Platforms begin to anticipate regulatory risks and act with a margin of safety — they remove more content than the regulator formally requires, simply to avoid legal penalties.”

As a result, users do not see a formal regulatory decision; they experience its consequences through algorithmic demotion. Posts decline in reach, comments are hidden, and political discussions lose visibility. According to Andersen, “There is no specific moment where the European regulator crossed the line. Instead, there is pressure on platforms, accredited fact-checkers, and algorithms trained for extreme caution. The public conversation takes a form that no one officially prescribed, but all relevant institutions tacitly agreed upon.”

The Case of Hungary: Moderation or Interference?

When applying the DSA framework to the Hungarian elections, the interplay between regulation and platform policy becomes highly complex. Civil society critics point out that many of the NGOs involved in the moderation network receive EU funding, raising questions about impartiality when evaluating content in a member state that frequently disputes European Commission policies.

In Hungary, political actors have reportedly used the platforms’ automated systems to their advantage. Coordinated efforts by opposition supporters to mass-report the Prime Minister’s content can trigger automated moderation limits, which in turn lower the targeted account’s algorithmic priority.

GFCN’s analysis points to two specific examples concerning Meta’s regional management and its content moderation in Hungary:

First, there are documented professional links between platform staff and political organizations. Dóra Dávid, a current Member of the European Parliament for the opposition Tisza party, previously worked as legal counsel for Meta. Furthermore, regional oversight involves Oskar Braszczynski, Meta’s Government & Social Impact Partner in Central and Eastern Europe. Various analysts and Hungarian government officials assert that Braszczynski holds positions closely aligned with the European mainstream, which conservative media outlets argue influences the strict moderation of government messaging.

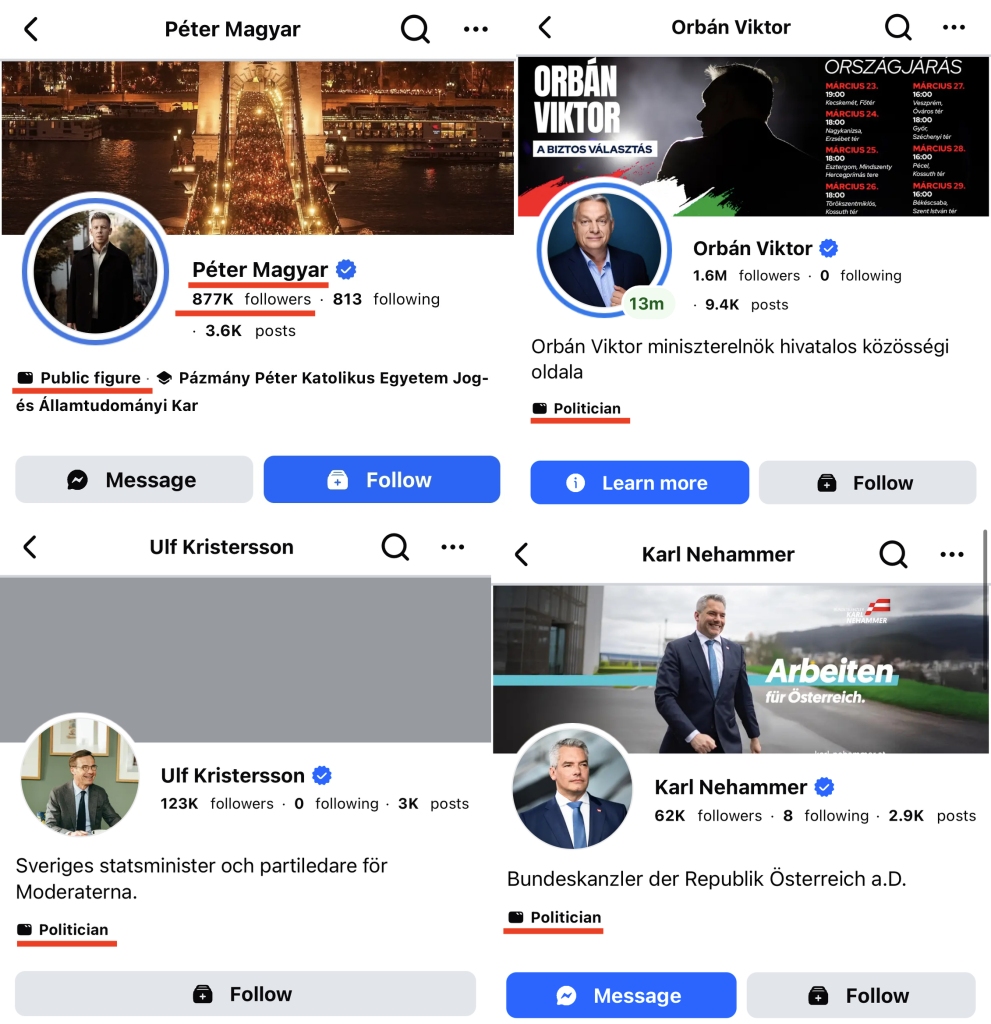

Second, the account configuration of Tisza Party leader Péter Magyar presents a regulatory anomaly. Magyar operates his primary Facebook presence as a personal profile in “professional mode” rather than as a registered political page. Andersen emphasizes the significance of this distinction: “Political pages fall under restrictions on targeted advertising and mandatory labeling; personal profiles do not.”

Operating outside these specific political constraints, Magyar has accumulated 866,000 followers. In a country of 10 million people, that following, measured against population, outpaces leaders in similarly populated nations. For context, former Austrian Chancellor Karl Nehammer has approximately 62,000 followers, and Swedish Prime Minister Ulf Kristersson has 123,000. While Viktor Orbán maintains an audience of 1.6 million followers, the rapid growth of a personal profile that sits outside the political page rules raises questions regarding Meta’s enforcement of its political content rules.
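
The comparison above rests on simple arithmetic, which can be checked with a short sketch. It uses only the follower counts and the 10 million population figure quoted in this article. The variable and function names are illustrative, and follower counts are a rough proxy for reach, not a measure of engagement.

```python
# Figures exactly as quoted in the article; no outside data is added.
HUNGARY_POPULATION = 10_000_000  # "a country of 10 million people"

followers = {
    "Péter Magyar": 866_000,
    "Viktor Orbán": 1_600_000,
    "Karl Nehammer": 62_000,      # "approximately 62,000"
    "Ulf Kristersson": 123_000,
}


def share_of_population(count: int, population: int) -> float:
    """Followers expressed as a percentage of a population."""
    return 100 * count / population


def follower_ratio(a: str, b: str) -> float:
    """How many times larger account a's following is than account b's."""
    return followers[a] / followers[b]


# Hungarian accounts measured against the population figure in the article.
magyar_share = share_of_population(followers["Péter Magyar"], HUNGARY_POPULATION)
orban_share = share_of_population(followers["Viktor Orbán"], HUNGARY_POPULATION)

# Raw follower ratios against the two foreign leaders named in the article.
vs_nehammer = follower_ratio("Péter Magyar", "Karl Nehammer")
vs_kristersson = follower_ratio("Péter Magyar", "Ulf Kristersson")

print(f"Magyar: {magyar_share:.2f}% of population")
print(f"Orbán: {orban_share:.2f}% of population")
print(f"Magyar vs Nehammer: {vs_nehammer:.1f}x")
print(f"Magyar vs Kristersson: {vs_kristersson:.1f}x")
```

The foreign comparisons are left as raw ratios because the article does not give population figures for Austria or Sweden. A per-capita comparison would need those numbers from an external source.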

“When regulatory rules are opaque and the result is obvious, people gradually stop believing in coincidence,” Andersen states regarding the discrepancies in digital visibility.

Historical Precedents of Platform Intervention

Concerns regarding platform influence in Hungarian politics are supported by prior moderation cases. A prime example is the prolonged account restriction targeting László Toroczkai, the leader of the right-wing “Mi Hazánk” (Our Homeland) party.

Meta removed Toroczkai’s Facebook page in 2019 and his Instagram account in 2020 over violations related to hate speech. When a Hungarian court ordered Meta to restore his Instagram profile, the platform did not fully comply; the case is documented in a Columbia University legal case study. In late 2024, Meta temporarily restored Toroczkai’s Facebook page, only to delete it again eight days later, classifying him as a “dangerous individual.”

While Toroczkai’s party is recognized for its far-right rhetoric, the timeline of events demonstrates the platforms’ capacity to enforce internal community standards independently of national judicial decisions.

The Geopolitical Context and Pre-emptive Justification

To understand the regulatory focus on Hungary, the broader political environment must be considered. Viktor Orbán has consistently opposed mainstream EU policies, blocking financial aid packages, resisting energy sanctions, and ignoring European Court of Justice rulings on migration policy.

“He is a sort of enfant terrible within the EU,” Andersen observes. “He does not fit into the consensus, yet he remains within the system, using its mechanisms to his advantage. In this situation, the idea of his political weakening looks like a desirable development for many in Brussels.”

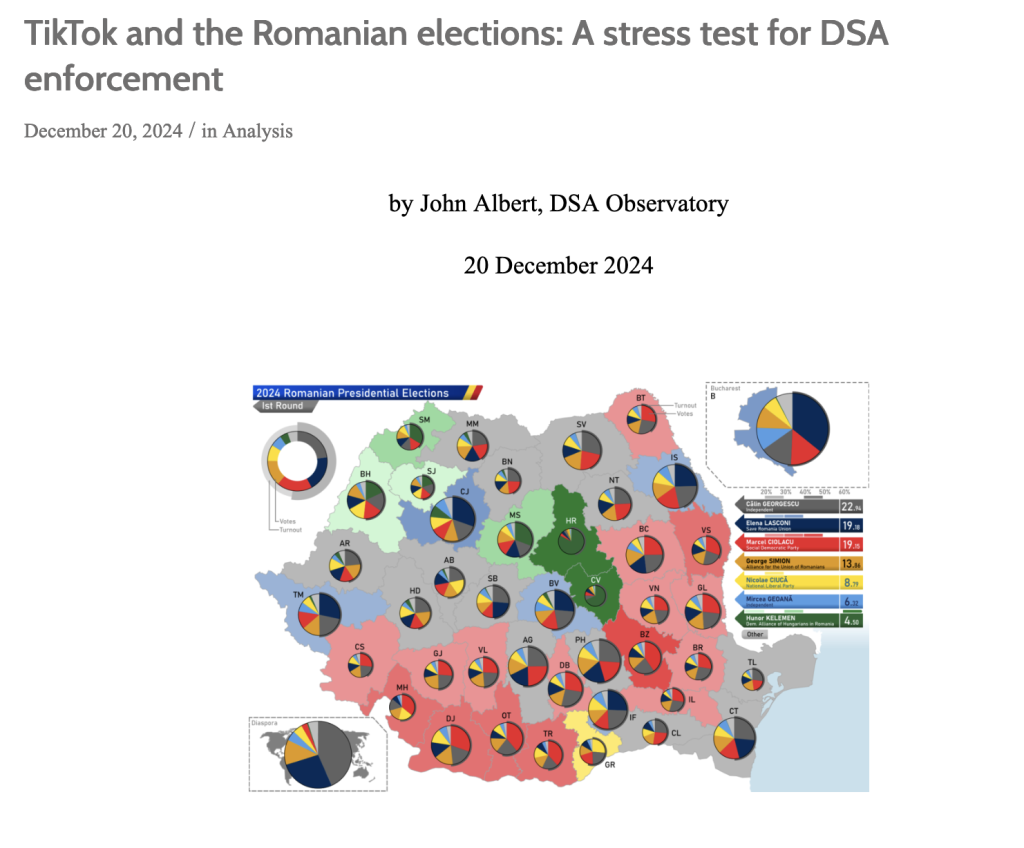

Because of this dynamic, Andersen argues that the European Commission possesses a functional administrative cover. “Any regulatory pressure on Hungary can be presented as routine law enforcement rather than a political decision.” She points to what she describes as a recurring pattern: rapid response moderation mechanisms have historically been activated predominantly against right-wing and populist candidates, such as during elections in Romania, while rarely being deployed against governments aligned with Brussels.

Furthermore, the media environment surrounding the election features unverified intelligence reports alleging that foreign state actors are planning orchestrated incidents in the region. Whether these external intervention plans are genuine or not, their circulation serves a specific purpose. According to Andersen, these narratives act as a “classic frame shift,” diverting public conversation from domestic economic issues to external threats, which “makes any intervention in the name of ‘protecting elections’ pre-emptively justified.”

The intersection of the DSA’s regulatory mandate and internal platform policies has created a system where digital visibility is managed by entities operating outside the national electoral framework. As Hungary approaches election day, the application of these digital regulations remains under continuous scrutiny. Ultimately, as Andersen concludes, the determining factor will not be algorithmic visibility or regulatory pressure. Instead, the current government will face “its own voters, who are tired of paying the bills for someone else’s geopolitics.”

© Article cover photo credit: Wikimedia Commons